Your customer calls at 9:47 PM. They want to know where their package is. Three minutes on hold. Then: "Press 1 for shipping, 2 for returns, 3 for..." They hang up. Order returned. Two-star review on Trustpilot.

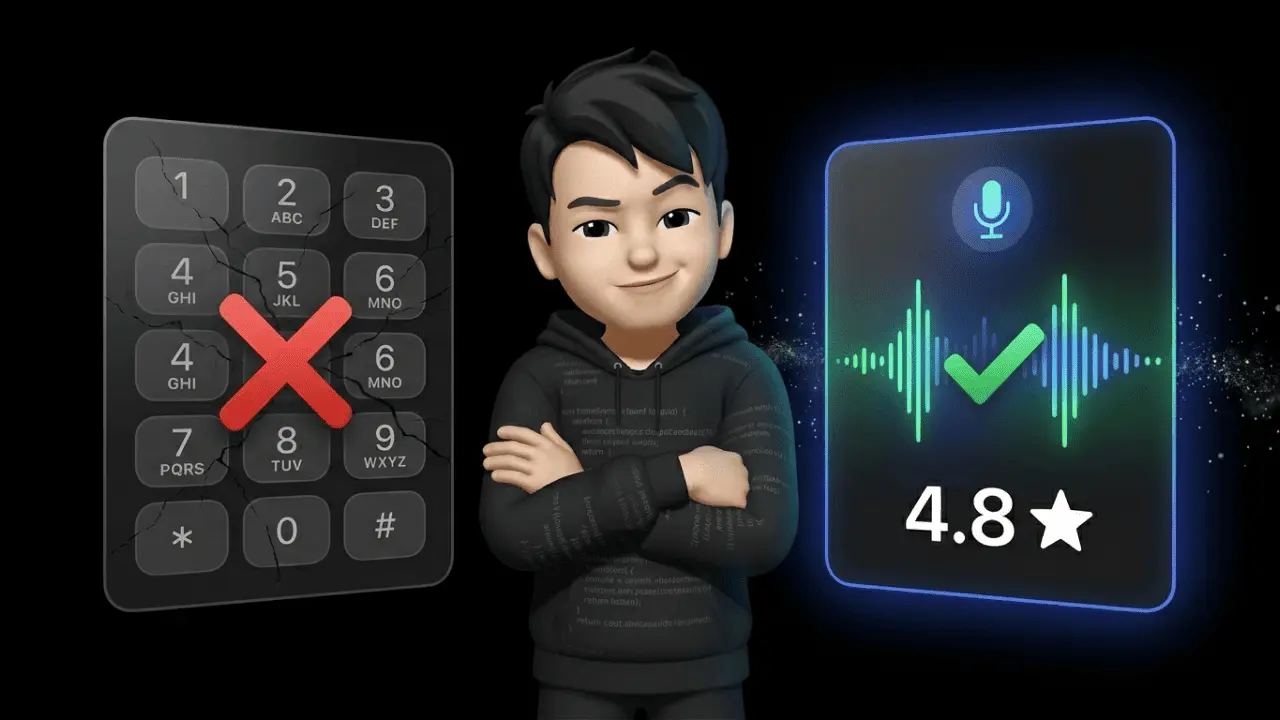

AI Voice Agents solve exactly this problem. They pick up, understand "Where's my package?" on the first attempt, pull the tracking number from your Shopify backend, and respond in under one second. No menu. No hold music. No escalation to a human.

But here's the thing: the technology isn't ready for everyone. And for most e-commerce brands, it's (still) not the first lever to pull. Invest blindly in voice today, and you burn budget. Ignore the trend entirely, and you miss the biggest shift in customer service since the invention of the call center.

Here's what actually works in 2026 – and where voice falls apart. No hype. Just numbers.

What Are AI Voice Agents?

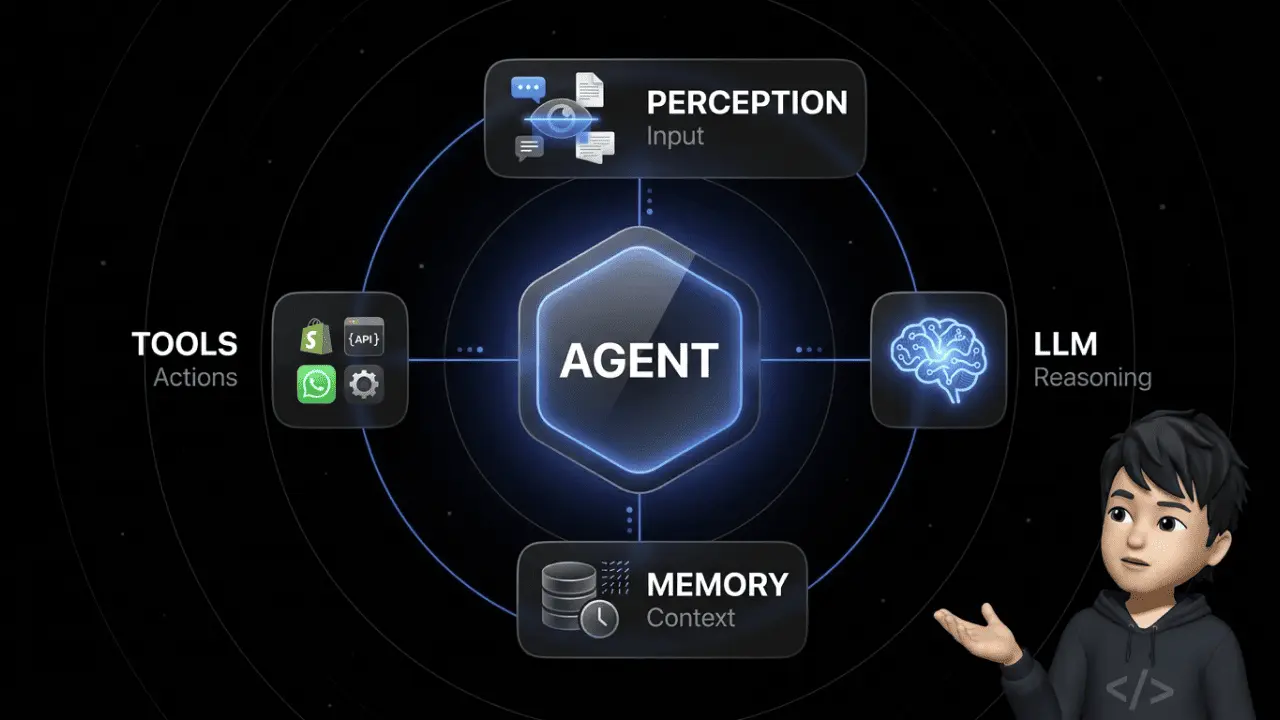

AI Voice Agents are autonomous software systems that conduct natural, real-time phone conversations with humans. They recognize spoken language and understand the caller's intent. They access company data and resolve tasks without human intervention — from order status queries to lead qualification.

The difference from a classic chatbot is the medium: voice instead of text. The difference from traditional IVR ("Press 1") is the intelligence: language comprehension instead of keyword matching. The difference from a human agent is scale: 10,000 parallel calls instead of 100.

What modern voice agents can do simultaneously:

- Listen — even through accents, dialects, background noise

- Understand — even half-finished sentences, slang, mid-conversation topic switches

- Act — cancel orders, book appointments, pull CRM data, escalate tickets

The market is exploding: according to Market.us, the global Voice AI Agents market is growing from $2.4B (2024) to $47.5B by 2034 — at a CAGR of 34.8%. Gartner adds two predictions: first, that conversational AI will reduce contact center labor costs by $80B by 2026 (Gartner press release, 2022). Second, that agentic AI will autonomously resolve around 80% of the most common customer service issues by 2029.
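The growth rate is easy to sanity-check against the two endpoints. Here's a minimal sketch, assuming ten compounding years between 2024 and 2034; the function name `cagr` is just illustrative:

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above: $2.4B in 2024 and $47.5B in 2034.
# Assumption: 10 compounding years between the two data points.
implied = cagr(2.4, 47.5, 10)
print(f"Implied CAGR: {implied:.1%}")  # Implied CAGR: 34.8%
```

The implied rate matches the quoted 34.8%, so the three numbers are at least consistent with each other.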

If you're still saying "AI phone calls still sound robotic", you've been asleep for the past 12 months. In blind tests, most people can no longer reliably tell whether they're talking to a voice agent or a human, provided the setup is right.

The Technology Behind It: ASR, LLM, and TTS

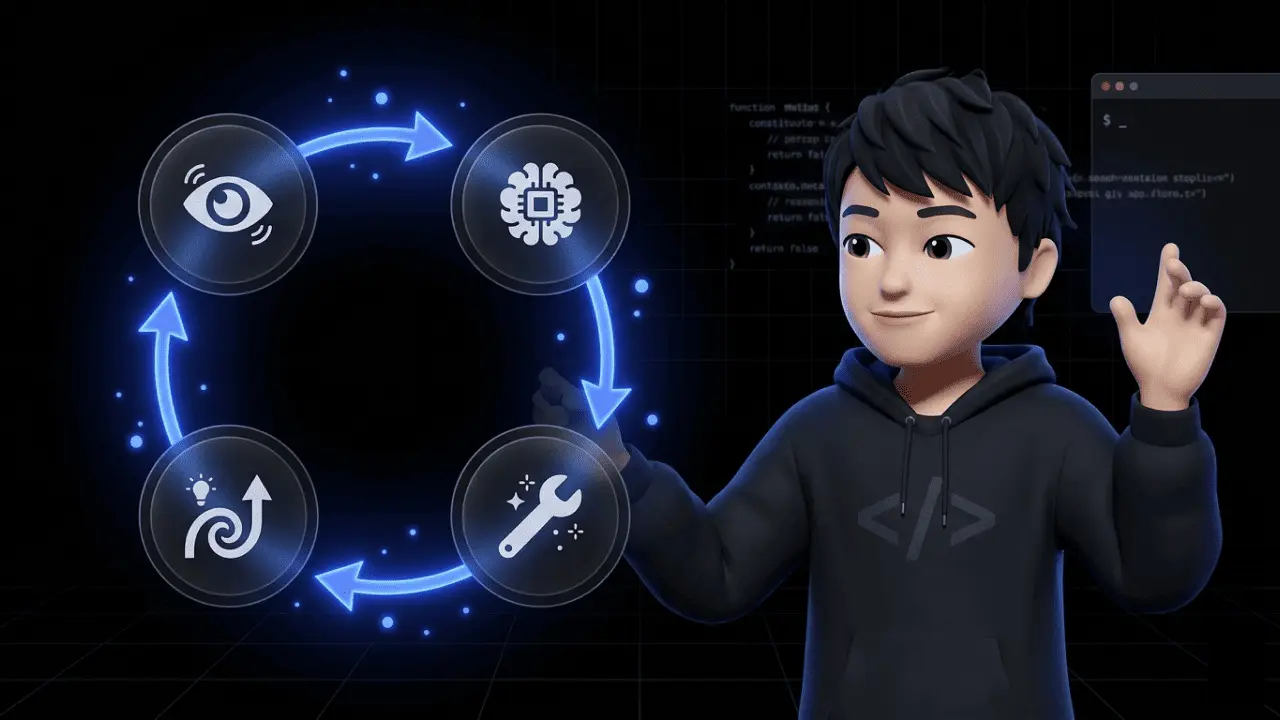

An AI Voice Agent consists of three core components communicating in real time through an orchestration layer. If any one of them is weak, the entire conversation breaks down.

| Component | Function | What It Determines |
|---|---|---|
| ASR (Automatic Speech Recognition) | Converts speech to text | How well the agent understands accents, dialects, noise |
| LLM (Large Language Model) | Understands intent, accesses data, formulates response | How smart and context-aware the agent is |
| TTS (Text-to-Speech) | Converts response back to voice | How natural and emotional the agent sounds |

The pipeline works like this: microphone → ASR converts spoken words to text → LLM interprets, uses RAG (Retrieval-Augmented Generation) to access Shopify data, ticket history, or knowledge base → TTS converts the response back to speech → speaker.
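To make the handoffs concrete, here's a minimal sketch of one conversational turn through that pipeline. Everything in it is a stand-in: `transcribe`, `retrieve`, `generate_reply`, and `synthesize` are hypothetical stubs, and the `ORDERS` dictionary plays the role of a shop backend. A real system would call an ASR model, a data source, an LLM, and a TTS engine at these points.

```python
from dataclasses import dataclass


@dataclass
class Order:
    order_id: str
    status: str


# Hypothetical in-memory stand-in for a shop backend or knowledge base.
ORDERS = {"1001": Order("1001", "out for delivery")}


def transcribe(audio: bytes) -> str:
    """ASR stub: pretends the incoming audio is already UTF-8 text."""
    return audio.decode("utf-8")


def retrieve(query: str) -> list[Order]:
    """Retrieval step of RAG: find orders whose ID is mentioned in the query."""
    return [order for order_id, order in ORDERS.items() if order_id in query]


def generate_reply(query: str, context: list[Order]) -> str:
    """LLM stub: a real system would prompt a model with query plus context."""
    if not context:
        return "Could you give me your order number?"
    order = context[0]
    return f"Order {order.order_id} is {order.status}."


def synthesize(text: str) -> bytes:
    """TTS stub: a real system would return synthesized audio here."""
    return text.encode("utf-8")


def handle_turn(audio_in: bytes) -> bytes:
    """One turn: audio in -> ASR -> retrieval + LLM -> TTS -> audio out."""
    text = transcribe(audio_in)
    context = retrieve(text)
    reply = generate_reply(text, context)
    return synthesize(reply)


print(handle_turn(b"Where is my order 1001?").decode("utf-8"))
```

Note that the reply is only as good as what `retrieve` returns: without a matching record, the agent can do nothing but ask for more information.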

Time is lost at every handoff. This is where the good systems separate from the bad ones. Modern architectures stream each step in parallel rather than processing them sequentially. In practice: while the customer is still speaking, the ASR is already transcribing and feeding partial results to the LLM.

Without this streaming architecture, you're looking at response times of 2 to 4 seconds — which kills any phone conversation.
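A back-of-the-envelope model shows why. The sketch below compares time to first audio when each stage waits for the complete output of the previous one versus when each stage starts on the first partial output. The stage timings are illustrative assumptions, not measurements of any provider, and the model ignores network overhead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    first_chunk_ms: int  # time until the stage emits its first partial output
    full_ms: int         # time until the stage has emitted its complete output


# Illustrative numbers only (assumption), chosen to show the effect.
STAGES = [
    Stage("ASR", first_chunk_ms=100, full_ms=600),
    Stage("LLM", first_chunk_ms=200, full_ms=1200),
    Stage("TTS", first_chunk_ms=120, full_ms=700),
]


def sequential_latency(stages: list[Stage]) -> int:
    """Each stage waits for the previous stage's complete output."""
    return sum(stage.full_ms for stage in stages)


def streaming_latency(stages: list[Stage]) -> int:
    """Each stage starts as soon as the previous stage emits a first chunk."""
    return sum(stage.first_chunk_ms for stage in stages)


print(f"sequential: {sequential_latency(STAGES)} ms")  # sequential: 2500 ms
print(f"streaming:  {streaming_latency(STAGES)} ms")   # streaming:  420 ms
```

With these assumed timings, the sequential pipeline lands in the 2 to 4 second range, while the streaming version starts speaking in under half a second.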

RAG is the second critical piece. A voice agent without access to your CRM, order database, or returns tool is a glorified answering machine. With access, it becomes first-level support.

And the third, often underestimated factor: background noise. In real-world scenarios (street, office, supermarket), noise levels sit between 55 and 65 decibels. Without specialized noise-cancellation models, the ASR's Word Error Rate jumps by 15 to 30%. Providers like Deepgram have specialized in exactly this problem, and the difference in production is massive.
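If you want to measure this yourself, Word Error Rate is straightforward to compute: substitutions, deletions, and insertions divided by the number of words in the reference transcript. Here's a minimal sketch using word-level edit distance. The lowercasing and whitespace tokenization are simplifying assumptions; production evaluations usually normalize punctuation and numbers as well.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # substitution or exact match
            ))
        prev = curr
    return prev[-1] / len(ref)


print(word_error_rate("where is my package", "where is the package"))  # 0.25
```

Run the same recordings through the ASR with and without street or office noise mixed in, and compare the two rates.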

Speech-to-Speech & Affective Computing: The Next Generation

Until now, every voice agent was a relay race with three runners: Audio → ASR → LLM → TTS → Audio. That's called cascading. Every handoff costs time, every step loses information (e.g., tone of voice gets stripped when audio becomes text).

The next generation eliminates this. Speech-to-speech models (end-to-end) process audio directly to audio — no text detour. This pushes latency to under 400 milliseconds and preserves emotions and nuances that get lost in the text step.

Examples: OpenAI's Realtime API, Google's Gemini Live, specialized S2S models. Trade-off: less control over intermediate results, harder debugging, more complex integration. Cascading remains the standard for enterprise setups over the next 12–18 months.

Parallel development: Affective Computing. Top systems analyze not just what is said, but how. Prosody — emphasis, rhythm, volume, pauses — reveals frustration, uncertainty, impatience. The AI adjusts its own tone accordingly.

In practice: caller says "Where the hell is my package?" → system detects anger via prosody → response tone becomes calmer, shorter, more empathetic. This isn't a gimmick — it prevents escalations.

Fair warning: This is the area with the most marketing exaggeration. Not everyone who promises "AI empathy" has real affective computing under the hood. Ask for prosody metrics, or it's just smoke and mirrors.

AI Voice Agents vs. IVR: Why "Press 1" Is Dying

Legacy IVR is a dinosaur. It only understands what you've explicitly programmed. If your customer says "I want to cancel my order" but your menu asks for the order number via keypad — the conversation goes nowhere. The customer presses 0 and demands a human.

Here are the hard differences:

| Criterion | Legacy IVR | AI Voice Agent |
|---|---|---|
| Input | Number keys or fixed keywords | Free speech, full sentences |
| Context | No memory within the call | Remembers everything in the conversation |
| Interruptions | System aborts or ignores | Understands barge-ins, responds to them |
| Topic switching | Menu reset | Seamless mid-sentence switching |
| Tone | Robotic, rigid | Natural, adjustable |

Barge-ins are the underrated factor. Humans interrupt each other constantly — especially when it's clear where the conversation is heading. A good voice agent system recognizes: "The customer is talking again, so I stop speaking and listen."

An IVR can't do that. It plays its announcement through — and your customer gets aggressive.

What this means for e-commerce: If you're still running a pure IVR system with more than 1,000 calls per month, you're burning customer lifetime value. According to a Vonage study, 61% of consumers perceive legacy IVR menus as a poor experience. 63% are specifically frustrated by irrelevant options. And 51% have abandoned a company entirely after landing in an IVR. The abandonment rate for classic IVR setups sits between 20 and 35% depending on the study. AI Voice Agents achieve under 10% in clean deployments. More in our pillar guide on how AI agents work.

The 300-Millisecond Rule: Why Latency Decides Everything

This gets technical — but it's worth it. This one number decides whether your voice agent sounds like a real conversation partner or a call center intern on day one.

Human response time in conversation sits at 200 to 300 milliseconds. Can't go faster — that's neurobiology. Anything over half a second, you notice unconsciously. You get uneasy.

The hard thresholds:

- Under 500 ms latency (end-to-end): Conversation feels natural. This is the target.

- 500–800 ms: Still acceptable. You notice the delay but aren't stressed.

- 800–1,000 ms: Stress begins. The caller asks "Hello?", repeats, talks over the agent.

- Over 1,000 ms: Conversation falls apart. Interruptions pile up. The caller thinks the line is dead.
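These bands are simple enough to encode in a monitoring check. Here's a minimal sketch; the band labels and the handling of the exact boundary values (800 ms counts as "stressful", 1,000 ms too) are assumptions, since the list above leaves the edges open.

```python
def classify_latency(latency_ms: float) -> str:
    """Map end-to-end response latency to the conversation-quality bands above."""
    if latency_ms < 0:
        raise ValueError("latency cannot be negative")
    if latency_ms < 500:
        return "natural"
    if latency_ms < 800:
        return "acceptable"
    if latency_ms <= 1000:
        return "stressful"
    return "broken"


for sample_ms in (320, 650, 900, 1500):
    print(sample_ms, classify_latency(sample_ms))
```

Feeding real call logs through a check like this shows at a glance what share of turns falls into each band.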

To hit sub-500ms, you need streaming architecture across all three layers — ASR, LLM, TTS. No system running REST calls with classic request-response will get there.

And most systems aren't even close. Hamming AI analyzed over 4 million live calls. The industry median: 1.4 to 1.7 seconds latency. That's roughly five times slower than the 300ms human expectation, and it's the gap between demo video and reality.

The unsung hero: VAD (Voice Activity Detection). VAD uses pause detection to determine when your customer is done speaking — versus just taking a breath. Good systems distinguish "0.3 seconds = still thinking" from "0.9 seconds = done, now answer." Bad VAD either talks over the customer or leaves 2 seconds of silence. Both kill the conversation.
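The turn-taking part of this can be sketched in a few lines. The example below assumes the speech/no-speech decision per audio frame already exists (that classification is the hard part of a real VAD) and only shows the endpointing logic: a short pause resets, a long pause ends the turn. The 100 ms frame size and the 900 ms threshold are illustrative assumptions taken from the example above.

```python
def detect_end_of_turn(frames, frame_ms=100, end_of_turn_ms=900):
    """Return the index of the frame where the turn ends, or None if it hasn't.

    `frames` is a sequence of booleans: True if the frame contains speech.
    """
    silence_ms = 0
    heard_speech = False
    for index, is_speech in enumerate(frames):
        if is_speech:
            heard_speech = True
            silence_ms = 0  # any speech resets the silence counter
        elif heard_speech:
            silence_ms += frame_ms
            if silence_ms >= end_of_turn_ms:
                return index
    return None


# 0.5 s speech, 0.3 s pause (still thinking), 0.4 s speech, then 0.9 s silence.
call = [True] * 5 + [False] * 3 + [True] * 4 + [False] * 9
print(detect_end_of_turn(call))  # 20
```

Set the threshold too low and the agent talks over the customer; set it too high and you get the dead air described above.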

Bottom line: When evaluating a voice agent, the first question isn't "What can it do?" but "How fast does it respond — and how good is its VAD?" Without sub-800ms and clean turn-taking logic, kill the project. The strong providers (Retell AI, Vapi, Deepgram) lead with exactly these metrics — because they know latency is the hard currency.

E-Commerce Use Cases: Where Voice Works Today

Not all voice scenarios are equally relevant for e-commerce brands. Here are the three areas where ROI is clearest today:

1. Inbound: Order Status & Shipping Queries. The number-one ticket in e-commerce. Around 40% of all support requests in D2C shops are "Where's my package?". A voice agent connected to your shipping provider, Shopify, and tracking system answers that in 15 seconds, 24/7, with no hold time.

2. Inbound: Returns Processing. Customer calls, gives order number, wants to return. The agent generates the return label, sends it via SMS or email, optionally asks for the return reason (valuable data). Done. If your shop handles 500+ returns per month, this scales immediately.

3. Outbound: Lead Qualification & Cart Recovery. Customer adds $300 to cart and bounces. Your voice agent calls within 5 minutes, asks specifically about the reason (shipping costs? payment method? doubts?) and offers a discount code. Legally sensitive in some jurisdictions (consent requirements!), but strong in B2B contexts.

More high-potential e-commerce use cases:

- Appointment booking for consultation products (furniture, bikes, kitchens)

- Post-purchase feedback (NPS via call instead of email — significantly higher response rates)

- Address changes and payment disputes

- Callback services for out-of-stock products

- B2B reorder handling (wholesale, food service suppliers)

A concrete scenario: A European furniture retailer with 40,000 orders/year had a 22% abandonment rate in phone support — mostly due to long hold times. A voice agent for order status and delivery dates handled 68% of calls fully automatically. Abandonment rate dropped to 6%. Human agents handled only complex cases — and CSAT increased in parallel.

Rule of thumb: If your ticket volume exceeds 3,000 calls/month and at least 50% are standardizable, a voice agent pilot typically pays for itself within 6 months. Below that: build chat automation first (see our comparison of the 10 best AI agent tools 2026).

Industry Outlook: Healthcare, Logistics, Finance

Voice agents are more advanced in other industries than in e-commerce — especially where telephony is the dominant channel and compliance is already strict.

Healthcare: Appointment booking, prescription renewals, clinical triage. The leverage is massive: practices lose 20–30% of callers because no one picks up. A voice agent stops the bleeding. Mandatory here: HIPAA (US) or strict GDPR compliance in Europe.

Logistics: Shipment tracking, package rerouting, delivery windows. Voice often runs hybrid here: agent handles standard queries, escalates complex cases.

Finance: Fraud detection, billing questions, credit card blocking. The most demanding regulatory environment: SOC 2, PCI DSS, and GDPR as a minimum. Providers like Rasa or Kore.ai specialize here because they offer on-premise deployment.

What e-commerce can learn from this: Compliance isn't a nice-to-have. If your voice agent processes order data, addresses, or payment info, you need a GDPR-compliant provider with EU hosting. US-cloud-only is a legal risk for sensitive data. Specifically: Data Processing Agreement (DPA) per GDPR Article 28, transparency obligation per Article 13 (the customer must be informed at the start of the call that they're speaking with an AI), and consent requirements for any call recording.

Add the EU AI Act. In force since 2024, with staggered deadlines. Voice agents can fall under "high-risk AI" depending on the use case — especially when analyzing emotional states (affective computing!). Voice is also a biometric identifier. No recording may be used for model training without explicit consent. Providers that repurpose your call data for their own training are a deal-breaker.

For heavily regulated industries (finance, healthcare, public sector), on-premise hosting or at minimum dedicated EU instances is the only safe path. Providers like Rasa or Kore.ai offer this; US-SaaS-first players typically don't. The GDPR principles covered in our guide "Is WhatsApp GDPR-compliant?" apply equally to voice.

The learning curve from healthcare and finance is clear: the success factor isn't the voice — it's clean integration into CRM, ERP, and knowledge base, plus a compliance strategy that comes before the pilot project, not after.

Voice vs. Chat: When Each Channel Wins

Here's the honest take. At Chatarmin, we build both: the AI chat agent armincx for ticket automation and the AI phone assistant for voice support. Not because we want to cross-sell — but because the channels do different jobs.

When chat wins:

- Your customer prefers typing. 56% of Gen-Z and millennial buyers prefer text over voice — not because they're lazy, but because text allows multitasking.

- Outbound reach. WhatsApp has an open rate of ~85%. An outbound call has a 30% pick-up rate at best.

- Cost per resolved ticket. A chat agent uses only the LLM layer. A voice call consumes ASR, LLM, and TTS compute in parallel.

- Documentation. Chat is written, voice isn't. For traceability and disputes, chat wins.

When voice wins:

- Phone is the dominant channel for your audience — older demographics, healthcare, complex advisory products.

- Complex consultations with back-and-forth — voice is 3–5× faster than chat ping-pong.

- Premium support & escalations — a phone call signals urgency that a ticket can't convey.

- Outbound flows where a call is expected — e.g., callbacks after complaints or appointment confirmations.

The most likely end state for e-commerce: hybrid. WhatsApp and chat cover 70–80% of volume. Voice handles escalations, premium segments, and specific outbound cases. Most brands should start with chat automation and add voice strategically once the business case is solid.

Our take: If you deploy voice before getting your chat tickets under control, you're building two half-automated channels instead of one functioning journey. Voice scales the problems you haven't solved in chat yet.

Leading Platforms: Technology Comparison

Before we get into providers: no pricing here. The market is too volatile, and honest assessment only works through technology. On top of that, every platform offers different terms depending on volume.

| Platform | Strength | Best For |
|---|---|---|
| Retell AI | Extremely low latency, developer-first, real-time orchestration | Teams with their own engineering power |
| Vapi | Scalable infrastructure for millions of calls, omnichannel | Enterprise with high call volume |
| ElevenLabs | Market leader in natural, emotional AI voices (TTS) | When voice quality is a brand factor |
| Deepgram | Most accurate speech recognition even in call center noise | Noisy environments, strong accent requirements |
| Rasa / Kore.ai | Enterprise compliance, on-premise possible, deep integrations | Banks, insurance, healthcare, public sector |

Core decision: Do you want an end-to-end platform (Vapi, Retell) or are you combining best-of-breed components (ElevenLabs for TTS + Deepgram for ASR + your own LLM)? Option 1 ships faster. Option 2 gives more control.

For European companies: check hosting region, sub-processor lists, and GDPR DPA. Some US providers now offer EU hosting, others don't. This is a deal-breaker, not a negotiation point.

What you won't find in these tools: Deep e-commerce integration into Shopify, Klaviyo, Gorgias. For that, you either need your own middleware or a specialized platform that ships with these connectors. Most voice providers are (still) industry-agnostic.

Frequently Asked Questions (FAQ) About AI Voice Agents

What is an AI Voice Agent?

An AI Voice Agent is an artificial intelligence that conducts natural phone conversations with humans, understands their concerns, and autonomously resolves tasks such as appointment bookings or support requests.

What is the latency of modern voice agents?

The latency of modern AI Voice Agents ideally sits between 300 and 500 milliseconds. Response times in this range are perceived by humans as a fluid, natural conversation.

Can AI Voice Agents replace human call center agents?

No, they don't replace humans but take over repetitive first-level support. Complex or highly emotional cases are still seamlessly escalated to human agents.

How much does an AI Voice Agent cost?

Costs typically consist of API calls for speech recognition, the AI model, and speech synthesis. In B2B environments, pricing is usually per conversation minute or per successfully resolved ticket.

Are AI Voice Agents GDPR-compliant in Europe?

Yes, provided the provider offers local EU hosting, doesn't repurpose sensitive biometric voice data for AI training, and has a Data Processing Agreement (DPA) in place.

What is the difference between a chatbot and a voice agent?

While a chatbot is limited to text-based input and output, a voice agent communicates via spoken language in real time and must handle acoustic challenges like accents, noise, and interruptions.

What does "barge-in" mean for AI voice assistants?

Barge-in is the technical capability of a voice agent to immediately stop speaking and start listening when the human caller talks over the AI mid-sentence.

What technologies power Voice AI?

A classic AI Voice Agent combines Automatic Speech Recognition (ASR) for listening, a Large Language Model (LLM) for thinking, and Text-to-Speech (TTS) for speaking.

Can AI phone assistants detect emotions?

Yes, state-of-the-art systems use affective computing to detect frustration based on vocal tone, speaking pace, and word choice, and adjust their own tone empathetically in response.

Which industries benefit most from Voice AI?

Industries with high, standardized call volumes such as e-commerce (order status), healthcare (appointment booking), and logistics benefit from the most immediate ROI.

Conclusion: What You Should Do With AI Voice Agents in 2026

Voice agents are no longer future technology. They're in production, solving real problems, and scaling when the setup is right. But they're not plug-and-play.

My honest recommendation for e-commerce brands:

- Under 2,000 calls/month: No dedicated voice agent. Build chat automation first — see how armincx automates customer service.

- 2,000–10,000 calls/month: A pilot is worth it. One clearly scoped use case (e.g., order status), 3 months testing, measure abandonment rate.

- Over 10,000 calls/month: Voice belongs on your roadmap. Demand latency under 800ms, clarify compliance, roll out incrementally.
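If you want to drop these tiers into a planning spreadsheet or internal tool, they reduce to a three-way check. A minimal sketch; the function name and return strings are illustrative, and treating exactly 10,000 calls as part of the pilot tier is an assumption.

```python
def voice_recommendation(calls_per_month: int) -> str:
    """Map monthly call volume to the recommendation tiers listed above."""
    if calls_per_month < 0:
        raise ValueError("call volume cannot be negative")
    if calls_per_month < 2000:
        return "chat automation first"
    if calls_per_month <= 10000:
        return "run a scoped pilot"
    return "put voice on the roadmap"


for volume in (800, 4500, 25000):
    print(volume, "->", voice_recommendation(volume))
```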

The biggest mistake I keep seeing: Teams deploy voice agents without first building a clean knowledge base and CRM integration. Result: the agent sounds great but delivers wrong answers. That's worse than no agent at all. Garbage in, garbage out — but with a natural voice, which makes it even worse.

The most important truth: Voice is a channel, not a strategy. The brands winning in 2026 think in customer journeys — not touchpoints. WhatsApp for newsletters and outbound, chat for standard queries, voice for escalations and premium support. The channel is the means, not the goal.

Brands that understand this playbook save 40–60% on support costs according to McKinsey, without sacrificing CSAT. The rest stand on the sidelines talking about hype.

Want to see what AI-powered customer service looks like in practice — across chat AND voice?

👉 Book a free demo — and see how armincx resolves tickets automatically while the AI phone assistant handles your 2nd-level queue.