Every e-commerce brand we talk to has a chatbot. And every other one wonders why it doesn't move the needle. Most chatbots answer questions. AI agents take action. Sounds like a semantic detail. It's actually the reason one drives customers away and the other closes 56 % of your tickets. If your thinking on AI agents vs. chatbots in 2026 is still stuck in 2022, you're burning budget on an FAQ tool with a language-model paint job.

AI Agents vs. Chatbots: The Difference in Two Sentences

A chatbot talks. An AI agent acts.

Chatbots react to input and return an answer. They're reactive, follow predefined dialog paths or an LLM prompt, and lose their memory after every session. AI agents decide what to do next, access other systems, and execute actions — without a human stepping in to follow up. They pursue a goal, plan sub-steps, use tools like CRM, ERP, or shipping providers, and learn from past interactions.

If your AI system only speaks, it's a chatbot. If it changes something — cancels an order, issues a discount code, closes a ticket in Zendesk — it's an agent.

What Is a Chatbot? The "Read-Only" Solution

A chatbot is a conversational interface. Nothing more. It's either rule-based (decision trees, keyword matching) or LLM-driven (free-form answers based on a prompt and, usually, a connected knowledge base).

What chatbots are good at:

- FAQ management: "How long does shipping take?" → Answer from the knowledge base.

- Basic support: Return policies, opening hours, contact info.

- Simple routing: User asks about returns → Handoff to support team.
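To make the "read-only" label concrete, here is a minimal Python sketch of a rule-based chatbot that covers the three cases above. The FAQ texts, the keywords, and `chatbot_reply` are hypothetical placeholders, not any vendor's API. Notice what the function never does: it only returns a string and writes nothing to a shop, CRM, or ticket system.

```python
# Hypothetical knowledge base: keyword tuples mapped to canned answers.
# The answer texts are placeholders, not real policies.
FAQ = {
    ("shipping", "delivery", "how long"): "Standard shipping takes 2-4 business days.",
    ("return policy",): "You can return unworn items within 30 days.",
    ("opening hours",): "Our support team is available Mon-Fri, 9:00-17:00.",
}

# Messages matching these keywords are routed to a human.
HANDOFF_KEYWORDS = ("return", "refund", "compensation")


def chatbot_reply(message: str) -> str:
    """Rule-based chatbot: keyword matching in, text out. No system is changed."""
    text = message.lower()
    # 1. FAQ management: answer from the knowledge base if a keyword matches.
    for keywords, answer in FAQ.items():
        if any(keyword in text for keyword in keywords):
            return answer
    # 2. Simple routing: hand off anything that needs a real action.
    if any(keyword in text for keyword in HANDOFF_KEYWORDS):
        return "Our support team will get back to you."
    # 3. Fallback: the bot has nothing else it can do.
    return "Sorry, I didn't get that. Could you rephrase?"


print(chatbot_reply("How long does shipping take?"))  # Standard shipping takes 2-4 business days.
print(chatbot_reply("I want to return my jacket"))    # Our support team will get back to you.
```

An LLM-driven chatbot swaps the keyword lookup for a model call, but the shape stays the same: text in, text out.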

Where they fail:

- Multi-step processes (check order → contact carrier → issue voucher).

- Context from other systems (CRM, shop, ERP, shipping provider).

- Follow-up actions where something needs to happen — not just be said.

On top of that, there's the Chatbot Failure Tax: Customers repeat themselves, click through menus, and still end up with the support team anyway. According to a Forrester survey, 30 % of customers actively look for an alternative brand after a frustrating chatbot experience. That's not a service-level problem. That's a retention problem.

What Is an AI Agent? The "Read-Write-Act" Solution

An AI agent isn't a chatbot variant. It's a different software category. It has — figuratively speaking — arms and legs, because it can write into systems and trigger actions there through API integrations.

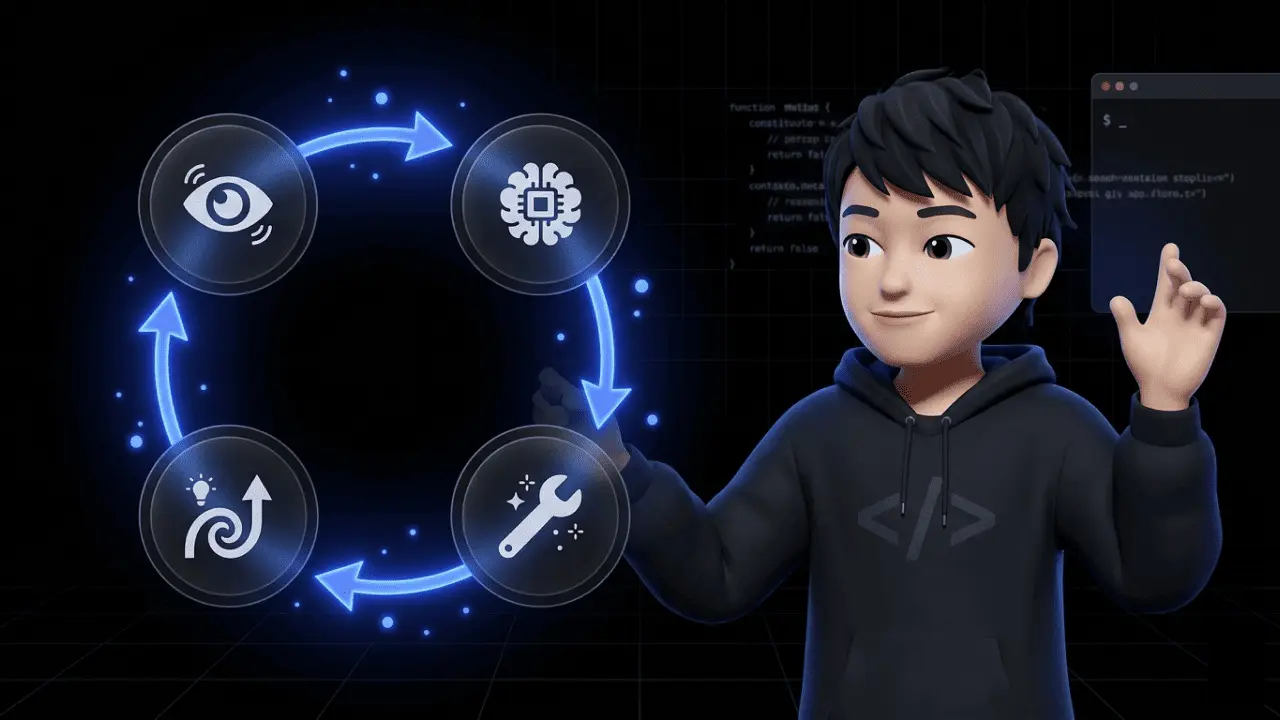

AI agents follow the PRAL cycle: Perception (understand context), Reasoning (make a decision), Action (use a tool), Learning (learn from outcomes). If you want to go deeper into the technical architecture, we break down how AI agents work in a separate article.
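The PRAL cycle maps naturally onto a loop. The following is a toy sketch, not a production architecture: `PralAgent` and its methods are hypothetical names, the reasoning step is a fixed rule standing in for an LLM call, and "learning" is reduced to storing outcomes in a list.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# A tool takes the structured context and returns a result string.
Tool = Callable[[dict], str]


@dataclass
class PralAgent:
    """Toy agent that runs one Perception-Reasoning-Action-Learning pass per message."""
    tools: Dict[str, Tool]
    memory: List[dict] = field(default_factory=list)

    def perceive(self, message: str, customer_id: str) -> dict:
        # Perception: turn raw text into structured context.
        intent = "order_status" if "order" in message.lower() else "unknown"
        return {"customer_id": customer_id, "intent": intent}

    def reason(self, context: dict) -> Optional[str]:
        # Reasoning: choose a tool. A real agent would ask an LLM here;
        # a fixed rule keeps this sketch deterministic.
        if context["intent"] == "order_status" and "check_order" in self.tools:
            return "check_order"
        return None

    def act(self, tool_name: Optional[str], context: dict) -> str:
        # Action: call the chosen tool, or escalate when no tool fits.
        if tool_name is None:
            return "escalated to a human"
        return self.tools[tool_name](context)

    def learn(self, context: dict, tool_name: Optional[str], result: str) -> None:
        # Learning (simplified): record the outcome for later runs or review.
        self.memory.append({"context": context, "tool": tool_name, "result": result})

    def run(self, message: str, customer_id: str) -> str:
        context = self.perceive(message, customer_id)
        tool_name = self.reason(context)
        result = self.act(tool_name, context)
        self.learn(context, tool_name, result)
        return result


agent = PralAgent(tools={"check_order": lambda ctx: f"Order for {ctx['customer_id']} is in transit."})
print(agent.run("Where's my order?", "c-42"))  # Order for c-42 is in transit.
```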

What an agent can actually do:

- Break down goals: "Check order status and inform the customer" becomes 4 sub-steps.

- Reconcile context: CRM history, order data, shipping status in one pass.

- Act across systems: write to CRM, cancel invoices, trigger package tracking, generate vouchers.

- Use long-term memory: A returning customer is recognized, preferences are applied.
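The difference between session memory and long-term memory is mostly a question of where state lives. A minimal sketch, assuming a simple in-process store keyed by customer ID; a production setup would use a database, and `CustomerMemory` with its methods is a hypothetical name:

```python
from collections import defaultdict
from typing import Any, Dict


class CustomerMemory:
    """Long-term memory: facts stored per customer ID, kept across sessions."""

    def __init__(self) -> None:
        self._facts: Dict[str, Dict[str, Any]] = defaultdict(dict)

    def remember(self, customer_id: str, key: str, value: Any) -> None:
        self._facts[customer_id][key] = value

    def recall(self, customer_id: str) -> Dict[str, Any]:
        # Return a copy so callers cannot modify stored facts by accident.
        return dict(self._facts.get(customer_id, {}))


memory = CustomerMemory()

# Session 1: the customer mentions preferences.
memory.remember("c-7", "preferred_channel", "WhatsApp")
memory.remember("c-7", "shoe_size", 39)

# Session 2, days later: a chatbot would start from an empty context here;
# the agent recalls what it stored before.
print(memory.recall("c-7"))  # {'preferred_channel': 'WhatsApp', 'shoe_size': 39}
```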

Important: An AI agent isn't a chatbot with more horsepower. It doesn't just replace the frontend — it replaces the caseworker workflow behind it.

The Direct Comparison: 8 Differences That Matter for Your Business

| Attribute | Chatbot | AI Agent |
|---|---|---|
| Core principle | Reacts to prompts | Pursues goals, acts proactively |
| How it works | Rule-based or LLM conversation | PRAL cycle + tool use |
| Task scope | Linear, single-step flows | Multi-step, dynamic workflows |
| System access | Interface / API to knowledge base | Read + write on CRM, ERP, shop, shipping |
| Decision-making | None: follows script or prompt | Yes: decides based on context |
| Memory | Per session, then gone | Long-term memory across sessions |
| Typical outcome | "Here's your tracking link" | "I've tracked your order and issued a 10 % voucher" |
| Maintenance | Maintain flows, update FAQ | Integrate tools, define guardrails |

A Real-World Example: What Happens With a Late Delivery?

This is the case where you see the difference between a chatbot and an AI agent in ten seconds.

Chatbot scenario: Customer writes: "Where's my order?" The chatbot replies with a tracking link. The customer clicks, sees the delay, gets frustrated. She asks for compensation. The chatbot replies: "Our support team will get back to you." Customer waits 48 hours for a response. Ticket open. Frustration up. NPS down.

AI agent scenario: Customer writes: "Where's my order?" The agent checks the shipping status via the carrier integration, detects a 2-day delay, checks her customer history in Shopify (repeat customer, 4 orders), proactively issues a 10 % voucher, informs her, and closes the ticket in the CRM. No human touch. 18 seconds.
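Under the hood, the agent scenario is five steps in sequence. A minimal sketch, assuming in-memory dictionaries stand in for the carrier, Shopify, and CRM integrations; the function name, the sample data, and the voucher policy (at least 2 days late, at least 2 past orders) are hypothetical:

```python
from dataclasses import dataclass

# Hypothetical stand-ins for the carrier, Shopify, and CRM integrations.
# A real agent would call each system's API at these points.
CARRIER_DELAYS = {"order-1001": 2}      # order ID -> days late
SHOP_ORDER_COUNTS = {"cust-7": 4}       # customer ID -> past orders
CRM_OPEN_TICKETS = {"ticket-55", "ticket-56"}


@dataclass
class Resolution:
    message: str
    voucher_percent: int
    ticket_closed: bool


def handle_late_delivery(order_id: str, customer_id: str, ticket_id: str,
                         min_delay_days: int = 2, min_orders: int = 2,
                         voucher_percent: int = 10) -> Resolution:
    # 1. Carrier integration: how late is the package?
    delay_days = CARRIER_DELAYS.get(order_id, 0)
    # 2. Shop integration: is this a repeat customer?
    is_repeat = SHOP_ORDER_COUNTS.get(customer_id, 0) >= min_orders
    # 3. Decision: compensate delayed orders from repeat customers (assumed policy).
    voucher = voucher_percent if delay_days >= min_delay_days and is_repeat else 0
    # 4. Inform the customer.
    if voucher:
        message = (f"Your order is {delay_days} days late, sorry! "
                   f"Here is a {voucher} % voucher for your next purchase.")
    elif delay_days:
        message = f"Your order is {delay_days} days late but on its way."
    else:
        message = "Your order is on schedule."
    # 5. CRM integration: close the ticket.
    CRM_OPEN_TICKETS.discard(ticket_id)
    return Resolution(message, voucher, ticket_id not in CRM_OPEN_TICKETS)


print(handle_late_delivery("order-1001", "cust-7", "ticket-55"))
```

In a real deployment each dictionary lookup becomes an API call, and step 3 is where guardrails decide how far the agent may go on its own.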

Same customer, same input. Two completely different outcomes.

Sales works the same way: a lead comes in, the agent detects intent, enriches the profile with CRM data, routes it to the right account executive (AE), and triggers a personalized follow-up sequence. No sales rep clicking through six tabs. If you want to understand where AI agents end and the next category up begins, we've broken down the difference between Agentic AI and AI Agents in a separate piece.

When Is a Chatbot Enough — And When Do You Need an AI Agent?

Not every e-commerce company needs an agent. A chatbot is fine when:

- Your ticket volume is low (under ~500 tickets per month).

- 80 % of inquiries are pure information queries (opening hours, shipping costs, return policy).

- You have no complex system integrations.

- Your team is small enough that support handles every exception manually.

You need an AI agent when:

- Workflows are cross-system (shop + CRM + shipping + payment).

- Decisions and judgment are required (voucher yes/no? refund amount?).

- You want to replace human copy-paste work that slows your team down.

- You need personalization at scale (1,000+ interactions per day).

The rule of thumb: If you or your team have to do something after every customer interaction, you need an agent, not a chatbot.
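The two checklists above can be read as a first-pass screen. A hedged sketch that encodes this section's own thresholds (500 tickets per month, 80 % information queries); the function name and the "review" fallback for cases neither list covers are assumptions:

```python
def chatbot_or_agent(tickets_per_month: int, info_query_share: float,
                     cross_system_workflows: bool, needs_judgment: bool) -> str:
    """Encode this section's rules of thumb as a first-pass screen."""
    # Chatbot criteria: low volume, mostly pure information queries, no integrations.
    if (tickets_per_month < 500 and info_query_share >= 0.8
            and not cross_system_workflows):
        return "chatbot"
    # Agent criteria: something must happen in other systems, or a decision is needed.
    if cross_system_workflows or needs_judgment:
        return "agent"
    # Neither list clearly applies (assumed fallback): inspect real tickets.
    return "review a ticket sample"


print(chatbot_or_agent(300, 0.9, False, False))  # chatbot
```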

How Do You Measure Whether an AI Agent Works?

The most common mistake: AI agents get measured like chatbots — by conversation minutes or session counts. Wrong. An agent gets measured by whether it closes tickets, not whether it replies.

The four KPIs that actually matter:

| KPI | What it measures | Realistic target |
|---|---|---|
| Automation Rate | Share of tickets the agent closes without a human | 40–60 % (standard support), up to 70 % in mature setups |
| First Contact Resolution (FCR) | Tickets solved in the first interaction | > 70 % |
| CSAT after agent interaction | Customer satisfaction after automated contact | ≥ your support team's CSAT (!) |
| Cost per Ticket | Full cost per ticket, including tool and maintenance costs | 60–80 % below manual cost per ticket |
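All four KPIs can be computed from an ordinary ticket export. A minimal sketch, assuming each ticket records whether the agent closed it, how many contacts it took, and an optional CSAT score; these field names are hypothetical, counting a single contact is a simplified FCR definition, and the sample numbers are made up for illustration:

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Ticket:
    closed_by_agent: bool          # resolved without a human touching it
    contacts: int                  # interactions until resolution
    csat: Optional[float] = None   # survey score, if the customer answered


def support_kpis(tickets: List[Ticket], total_cost: float,
                 manual_cost_per_ticket: float) -> dict:
    """total_cost: tool + maintenance + human handling cost for the same period."""
    n = len(tickets)
    agent_scores = [t.csat for t in tickets if t.closed_by_agent and t.csat is not None]
    cost_per_ticket = total_cost / n
    return {
        "automation_rate": sum(t.closed_by_agent for t in tickets) / n,
        "first_contact_resolution": sum(t.contacts == 1 for t in tickets) / n,
        "csat_after_agent": sum(agent_scores) / len(agent_scores) if agent_scores else None,
        "cost_per_ticket": cost_per_ticket,
        "savings_vs_manual": 1 - cost_per_ticket / manual_cost_per_ticket,
    }


# Made-up sample: three agent-closed tickets, one handled by a human.
sample = [Ticket(True, 1, 5.0), Ticket(True, 1, 4.0), Ticket(False, 3), Ticket(True, 2)]
print(support_kpis(sample, total_cost=4.0, manual_cost_per_ticket=5.0))
```

Compare `csat_after_agent` against your human team's CSAT over the same period, not against an industry benchmark.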

The underrated fifth KPI: Escalation Quality. When the agent escalates, does the ticket arrive at support with full context — or does the human have to start from scratch? If the latter, you don't have an agent. You have a more expensive chatbot.
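Escalation quality becomes measurable once the handoff has a defined shape. A sketch under assumptions: the `EscalationHandoff` fields below are a hypothetical schema, and "complete" simply means that none of the core fields is empty:

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class EscalationHandoff:
    """What the agent passes to a human when it escalates (hypothetical schema)."""
    ticket_id: str
    customer_id: str
    reason: str                                   # why the agent stopped
    transcript: List[str]                         # the conversation so far
    actions_taken: List[str] = field(default_factory=list)
    order_id: Optional[str] = None

    def missing_context(self) -> List[str]:
        # Fields a human would otherwise have to collect again from scratch.
        missing = [name for name in ("ticket_id", "customer_id", "reason")
                   if not getattr(self, name)]
        if not self.transcript:
            missing.append("transcript")
        return missing


def escalation_quality(handoffs: List[EscalationHandoff]) -> float:
    """Share of escalations that reach support with complete context."""
    if not handoffs:
        return 1.0  # no escalations, so no context was lost (assumed convention)
    complete = sum(1 for h in handoffs if not h.missing_context())
    return complete / len(handoffs)
```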

If your CSAT after agent interaction sits below your team's CSAT, you have a configuration problem — not a technology problem. The agent needs better guardrails, not more tickets.

GDPR: What You Need to Know for European E-Commerce

In German and Austrian e-commerce conversations, the GDPR question is always the second or third objection — and it's a valid one. Both chatbots and AI agents process personal data the moment they talk to real customers. The difference: AI agents typically process more data from more systems.

The four points you need to have locked down:

- Data Processing Agreement (DPA) with your vendor. Non-negotiable. If your agent provider doesn't offer a DPA, the conversation ends there.

- Data processing location. EU hosting is the clean answer. With US-based LLMs (e.g., OpenAI or Anthropic models processed in the US), you additionally need Standard Contractual Clauses (SCCs) and a legal basis, which, when in doubt, means explicit consent rather than "legitimate interest."

- Scope of customer data. What data does the agent see? Only the current conversation? Order history? The full CRM profile? The broader the scope, the higher the requirements for data minimization and purpose limitation.

- Training data. Are customer chats used for model training? In enterprise setups, never. In consumer LLMs, often. This needs to be excluded contractually.
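The scope question can also be enforced in code, before any data reaches the model. A minimal sketch of per-intent field allowlisting; the intents, field names, and profile are hypothetical, and this addresses only data minimization, not the contractual points above:

```python
from typing import Any, Dict, Set

# Hypothetical allowlists: for each intent, the only CRM fields the agent may see.
FIELDS_BY_INTENT: Dict[str, Set[str]] = {
    "order_status": {"customer_id", "first_name", "open_orders"},
    "voucher_decision": {"customer_id", "order_count"},
}


def minimize(profile: Dict[str, Any], intent: str) -> Dict[str, Any]:
    """Drop every field the current intent does not need (data minimization)."""
    # Unknown intents fall back to the bare customer ID.
    allowed = FIELDS_BY_INTENT.get(intent, {"customer_id"})
    return {key: value for key, value in profile.items() if key in allowed}


crm_profile = {
    "customer_id": "c-7",
    "first_name": "Anna",
    "email": "anna@example.com",
    "birthday": "1990-04-12",
    "order_count": 4,
    "open_orders": ["order-1001"],
}
print(minimize(crm_profile, "voucher_decision"))  # {'customer_id': 'c-7', 'order_count': 4}
```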

Practical advice: If your vendor answers vaguely when asked "Where is the data processed?", find another vendor. In 2026, this isn't something you negotiate. It's a baseline requirement.

Chatarmin hosts in the EU, includes DPAs in standard onboarding, and doesn't train models on customer data. If that's your most important criterion, let's walk through the data flow directly in the demo.

The Market Reality in 2026: Between Hype and Wishful Thinking

According to Gartner, by 2028 at least 15 % of daily work decisions will be made autonomously by AI agents — up from effectively 0 % in 2024. In addition, 33 % of all enterprise software applications will include agentic AI capabilities by 2028.

That's the optimistic side. Now the other: Over 40 % of all agentic AI projects will be canceled by the end of 2027 — according to Gartner, due to escalating costs, unclear business value, and inadequate risk controls. Meaning: anyone being sold an agent as "Chatbot 2.0" right now is running straight into that statistic.

The lesson is simple: AI agents are not an end in themselves. They work when the use case is clear, the integrations are stable, and there are real guardrails. They fail when they're bought just to tick a hype box. At Chatarmin, we only deploy agents where they solve a measurable problem. No agent that exists just so you can show it off in your pitch deck.

Bottom Line: AI Agents Aren't Chatbots on Steroids

When AI agents vs. chatbots comes up in your next team meeting, the real question isn't "Which tool do we buy?" It's this: Do you want a system that answers questions, or one that gets work done?

Chatbots are fine for FAQ and low-stakes routing. AI agents are what you need when customer service no longer scales, when your support team is drowning in repetitive small tasks, or when you want tickets that sit open for 48 hours today resolved in 18 seconds.

We run AI agents that close 56 % of our customers' tickets without human intervention — directly via WhatsApp, integrated into Shopify, Klaviyo, and your CRM. If you want to know what that looks like for your setup, book a demo. 30 minutes, no pitch-deck battle. We'll look at your tickets together and tell you honestly whether an agent solves your problem — or whether a better chatbot is enough.

FAQ: AI Agents vs. Chatbots

Is an AI agent just a better chatbot?

No. A chatbot communicates, an AI agent executes actions in connected systems — the difference is categorical, not gradual.

Can I upgrade my existing chatbot to an AI agent?

Not directly. The architecture is fundamentally different; the realistic path is to keep the chatbot as the frontend and layer an agent with tool integrations on top.

Do AI agents even make sense for small shops?

Usually not. Below roughly 500 tickets per month, the setup effort rarely pays off; a well-maintained chatbot plus an FAQ page makes more economic sense.

Do AI agents replace human support?

No. They take over the repetitive 50–70 % of inquiries so your team can focus on cases that need judgment and empathy.

Can AI agents and chatbots be used GDPR-compliant?

Yes. Both systems can be operated GDPR-compliant when data processing is transparently documented, the legal basis is clean, and a Data Processing Agreement with the vendor is in place.

Do AI agents also work on WhatsApp?

Yes. AI agents can be integrated natively into WhatsApp and can check orders, trigger returns, or handle support tickets through the Business API.

How long does it take to set up an AI agent in e-commerce?

Less time than most people think. For a support agent with standard integrations (Shopify, Zendesk, WhatsApp), 4–8 weeks is realistic, assuming the system landscape is clean and the knowledge base is in place.

Can an AI agent hallucinate and give false information?

Yes. The risk exists with any LLM-based system — it's significantly reduced through guardrails (fixed tool responses, escalation triggers, whitelists) and human review during the launch phase.
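The three guardrail types named here can be sketched in a few lines. This is an illustration under assumptions, not any platform's API: the tool names, the 10 % voucher cap, and the escalation keywords are hypothetical placeholders. The model proposes a tool call, and plain code decides whether it runs:

```python
# Hypothetical guardrail settings; the values are placeholders, not recommendations.
ALLOWED_TOOLS = {"check_order", "issue_voucher"}      # whitelist
MAX_VOUCHER_PERCENT = 10                              # above this, a human decides
ESCALATION_KEYWORDS = ("lawyer", "chargeback", "complaint")


def check_tool_call(tool: str, args: dict, user_message: str) -> str:
    """Return 'allow', 'block', or 'escalate' for a tool call the model proposes."""
    # Escalation trigger: sensitive topics always go to a human.
    if any(keyword in user_message.lower() for keyword in ESCALATION_KEYWORDS):
        return "escalate"
    # Whitelist: the model may only call tools that were explicitly allowed.
    if tool not in ALLOWED_TOOLS:
        return "block"
    # Escalation trigger: decisions above a fixed limit need human review.
    if tool == "issue_voucher" and args.get("percent", 0) > MAX_VOUCHER_PERCENT:
        return "escalate"
    return "allow"


def order_status_reply(tool_result: dict) -> str:
    # Fixed tool response: every fact comes from the tool result,
    # so the model has no room to invent a status or delivery date.
    return f"Your order {tool_result['order_id']} is {tool_result['status']}."
```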

Do I need a developer to run an AI agent?

No. Modern agent platforms are no-code/low-code — you only need a developer if you want to connect proprietary, non-standard systems.

What does an AI agent cost compared to a chatbot?

More upfront, less per ticket. An AI agent is significantly more expensive to set up than a classic chatbot, but thanks to the higher automation rate, its cost per ticket is usually 60–80 % below the manual support path, which amortizes the setup effort within a few months.