A chatbot tells you where your package is. An AI customer service agent cancels the order, generates the return label, and updates the address in your ERP — without a human clicking "Next."

That's the difference. And in 2026, it decides whether your DACH e-commerce team still scales — or drowns in tickets.

I see it every day: shops stuck in Outlook chaos, running static macros in Gorgias, or rolling back legacy bots that hallucinated one time too many. Classic chatbots aren't the solution. They're part of the problem.

Here's the update. No buzzword bingo.

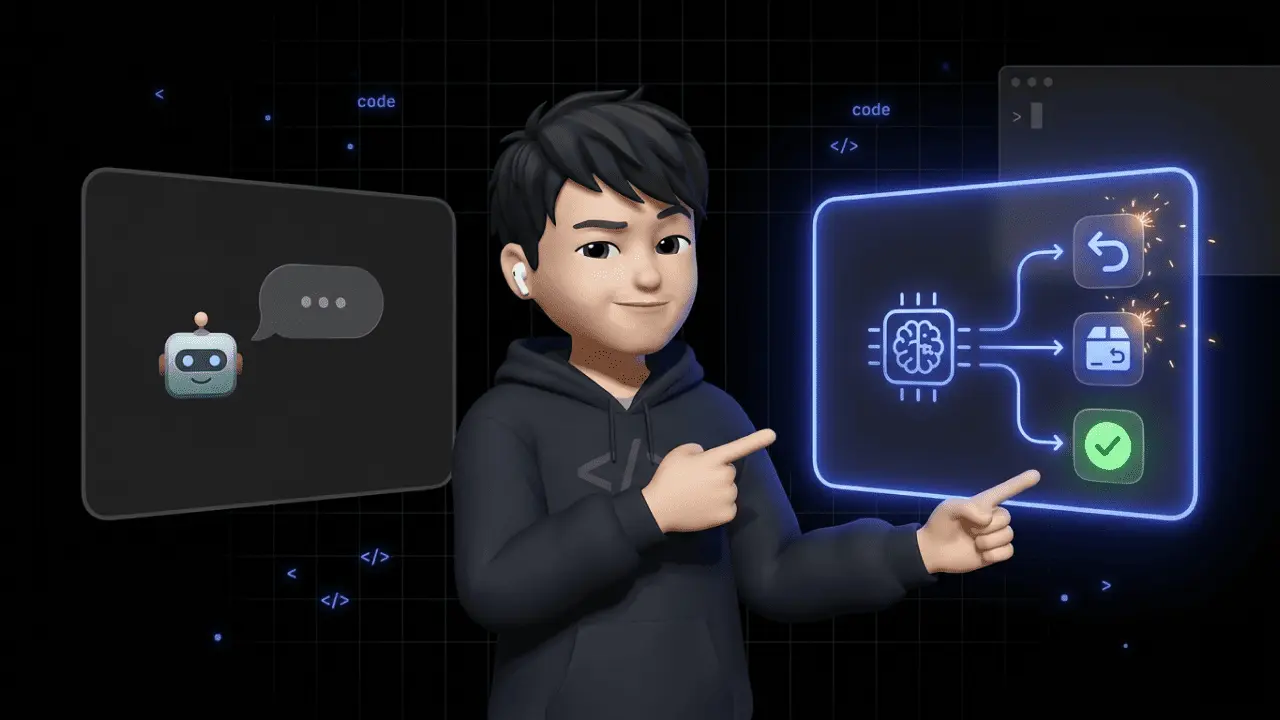

What AI Customer Service Agents Actually Are

Short version: Not a bot that picks between A and B. A system that understands, plans, and acts.

Three capabilities make the difference:

- Reasoning & Planning: The agent doesn't just spot "word X appears". It understands what the customer actually wants. Example: "My package has been out for 5 days, can I still cancel?" That's not a tracking question. It's a cancellation request for an order that is already in transit. The agent gets that.

- Tool Use: The agent executes real actions via APIs. Creating a return label in Shopify, updating an address in JTL, issuing a voucher in Xentral. Not just firing off a mail template.

- Orchestration: Multi-step sequences. Find order → check address → pull shipping status → respond → close ticket. Fully autonomous.
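To make the orchestration sequence concrete, here is a minimal sketch of the chain find order → check address → pull shipping status → respond → close ticket. Everything in it is an assumption for illustration: `ORDERS` and `TRACKING` are in-memory stand-ins for your shop and carrier APIs, and names like `handle_ticket` are hypothetical, not any vendor's interface.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Order:
    order_id: str
    address: str
    shipped: bool


@dataclass
class Ticket:
    order_id: str
    question: str
    status: str = "open"
    log: list[str] = field(default_factory=list)


# Hypothetical in-memory stand-ins for the shop and carrier APIs.
ORDERS = {"A-1001": Order("A-1001", "Hauptstr. 1, 10115 Berlin", shipped=True)}
TRACKING = {"A-1001": "in transit"}


def find_order(order_id: str) -> Optional[Order]:
    return ORDERS.get(order_id)


def shipping_status(order: Order) -> str:
    if not order.shipped:
        return "not shipped yet"
    return TRACKING.get(order.order_id, "unknown")


def handle_ticket(ticket: Ticket) -> str:
    """Run the sequence: find order, check address, pull status, respond, close."""
    order = find_order(ticket.order_id)
    if order is None:
        # Nothing to act on: escalate instead of guessing.
        ticket.status = "escalated"
        ticket.log.append("order not found")
        return "I could not find that order, so a colleague will take over."
    ticket.log.append("order found")

    if not order.address.strip():
        ticket.status = "escalated"
        ticket.log.append("address missing")
        return "Your order has no delivery address on file, so a colleague will take over."
    ticket.log.append("address ok")

    status = shipping_status(order)
    ticket.log.append(f"status: {status}")

    ticket.status = "closed"
    return f"Order {order.order_id} to {order.address} is currently: {status}."
```

The `log` list matters as much as the reply: it records every step the agent took, which is what a human needs if the case escalates later.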

The industry splits this into five autonomy levels: from Level 1 (fixed script bot) to Level 5 (multi-agent systems that coordinate among themselves). E-commerce support teams realistically land at Levels 3–4: standard requests are handled autonomously, and humans take over on escalation.

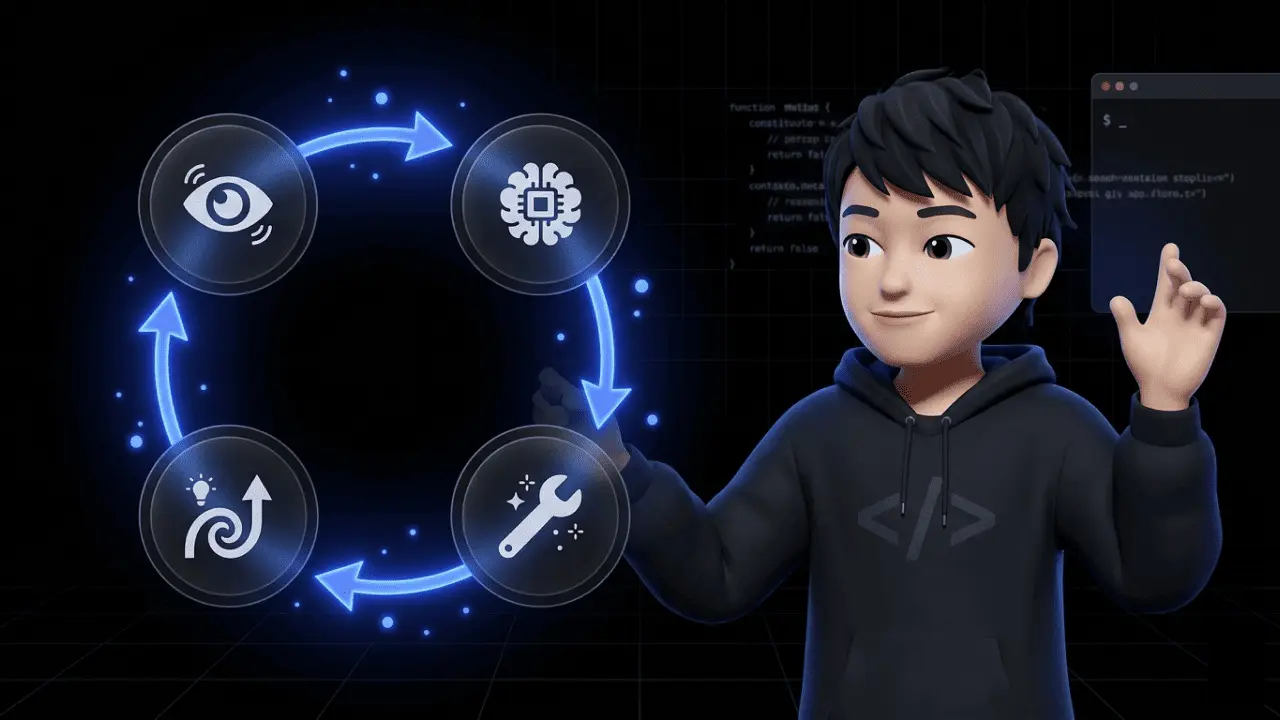

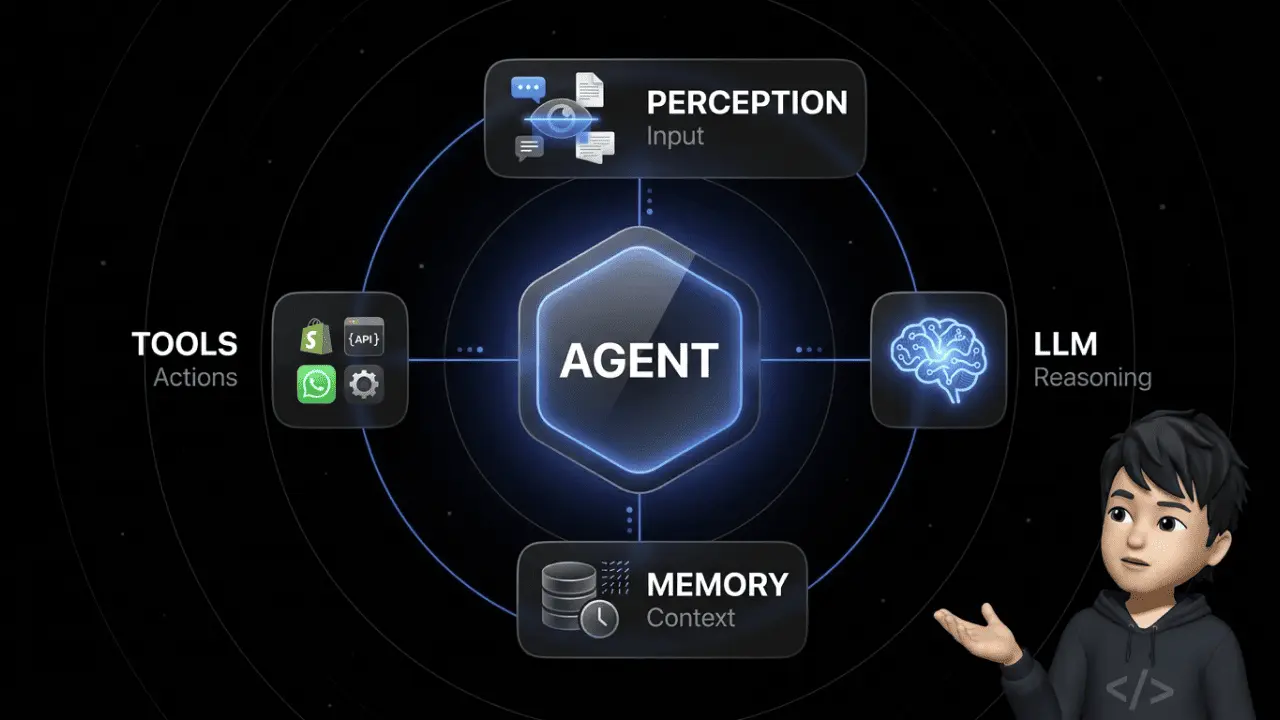

Deeper dive into the architecture: How do AI agents work?

The Five Autonomy Levels: Where Does Your Setup Land?

This breakdown isn't marketing fluff. It helps you evaluate what your system actually delivers.

| Level | What happens | E-commerce example |
|---|---|---|
| Level 1 – Scripted | Fixed if-then rules, no language understanding | FAQ bot: "Press 1 for shipping, 2 for returns." |
| Level 2 – Reactive | NLP detects intents, responds with templates | Mail autoreply with dynamic name and tracking number |
| Level 3 – Executing | LLM + RAG, executes single actions in systems | Agent creates return label in Shopify after confirmation |
| Level 4 – Orchestrating | Multi-step, plans autonomously, pulls data across systems | Partial cancellation: check order → inventory API → refund → customer email |
| Level 5 – Collaborative | Multiple specialized agents coordinate on the same case | Returns agent + compliance agent + fraud agent on one case |

Where do DACH shops realistically land? Most teams sit on Level 1–2 today. Anyone running a modern tool properly reaches Level 3–4. Level 5 is enterprise territory — irrelevant for 95% of e-commerce brands.

Why this matters: Many vendors sell themselves as "AI agents" but functionally deliver Level 2. Ask concretely: "Can the system trigger a refund?" If the answer is "with manual approval by an employee," it's Level 2 with better PR.
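If you want to run that vendor check systematically, the level table can be read as a ladder of capabilities. The sketch below is one possible reading, not an industry standard: the four flags and the `autonomy_level` function are hypothetical names, and "executes actions" is assumed to mean "without an employee approving each one."

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capabilities:
    understands_language: bool  # detects intents instead of matching fixed rules
    executes_actions: bool      # triggers e.g. a refund without employee approval
    plans_multi_step: bool      # chains several actions across systems
    coordinates_agents: bool    # several specialized agents work one case


def autonomy_level(caps: Capabilities) -> int:
    """Map capability flags to the five levels; each level requires the one below it."""
    if not caps.understands_language:
        return 1
    if not caps.executes_actions:
        return 2
    if not caps.plans_multi_step:
        return 3
    if not caps.coordinates_agents:
        return 4
    return 5


# A vendor whose refunds need manual approval: language understanding, no real actions.
vendor = Capabilities(
    understands_language=True,
    executes_actions=False,
    plans_multi_step=False,
    coordinates_agents=False,
)
print(autonomy_level(vendor))
```

For the vendor above the function returns 2, which matches the "Level 2 with better PR" verdict.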

Why an LLM Alone Isn't Enough

Plug ChatGPT into your support and hope for the best? Strong recommendation: don't.

Standard LLMs have two problems. They hallucinate. And they don't know your company.

Concretely: an agent running only on an open LLM makes up shipping terms. Invents return windows. Names products you never carried. One screenshot on Trustpilot — and your customer experience is done.

The answer is RAG (Retrieval-Augmented Generation). Before every response, the agent pulls verified data from real sources: knowledge base, ticket history, product FAQs, shop system. The LLM formulates the answer strictly based on that data. No free association, and far fewer hallucinations, because the model answers from retrieved facts instead of from memory.

Real example: Customer asks about warranty terms for product X. Without RAG: LLM guesses. With RAG: agent pulls product X from Shopify, checks your terms, responds fact-based.
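A minimal sketch of the retrieval step, under loud assumptions: the three knowledge base entries are invented sample data, retrieval is plain keyword overlap (production systems typically use embeddings), and no model is called. The point is the shape: retrieve first, build the prompt from the sources, and refuse to build one when nothing relevant comes back.

```python
import re
from typing import Optional

# Hypothetical sample entries; in practice these come from your help center or shop system.
KNOWLEDGE_BASE = [
    {"id": "warranty-x", "text": "Product X has a 24 month warranty covering manufacturing defects."},
    {"id": "returns", "text": "Returns are accepted within 30 days of delivery."},
    {"id": "shipping", "text": "Standard shipping within Germany takes 2 to 3 business days."},
]

STOPWORDS = {"a", "an", "the", "is", "are", "for", "of", "to", "what", "do", "you", "my", "i"}


def tokenize(text: str) -> set[str]:
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def retrieve(question: str, top_k: int = 2, min_overlap: int = 2) -> list[dict]:
    """Rank entries by keywords shared with the question; drop weak matches."""
    q = tokenize(question)
    scored = [(len(q & tokenize(doc["text"])), doc) for doc in KNOWLEDGE_BASE]
    scored = [(score, doc) for score, doc in scored if score >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(question: str) -> Optional[str]:
    """Return a grounded prompt, or None when nothing was retrieved (hand off, don't guess)."""
    sources = retrieve(question)
    if not sources:
        return None
    context = "\n".join(f"[{doc['id']}] {doc['text']}" for doc in sources)
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say so.\n\n"
        f"Sources:\n{context}\n\nCustomer question: {question}"
    )
```

Calling `build_prompt("What is the warranty for product X?")` yields a prompt containing only the warranty entry. A question the knowledge base cannot answer returns `None`, which is the signal to escalate.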

Running AI customer service agents without RAG means building risk. Not automation.

The Human Handoff: Where 90% of Setups Die

The Nextiva CX Report 2025 is clear: 98% of service leaders consider smooth AI-to-human handoffs essential. 90% admit they can't make them work.

Why? Because most tools treat the handoff as an emergency exit. The moment the AI gives up, the chat is thrown at the next available agent. Who starts from zero. The customer explains their problem for the third time. That's the exact moment AI customer service destroys your CSAT instead of lifting it.

A clean handoff has three clear triggers:

- Confidence score below 60–70%: The agent recognizes it would be guessing. Hands off before doing damage.

- Negative sentiment: Word patterns like "this is outrageous," "my lawyer," "third email." Escalate immediately.

- Complexity above threshold: Partial cancellations, multi-product orders, legal matters. Human takes over.

Equally critical: Context preservation. The human agent inherits a structured summary: What has the customer explained? What actions did the AI attempt? What's the sentiment? Which order data is already loaded?
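The three triggers and the structured summary can be sketched in a few lines. Treat this as an illustration under assumptions: the 0.65 threshold is simply the midpoint of the 60–70% range, sentiment is detected by naive phrase matching, and all names (`Conversation`, `handoff_reason`, `handoff_summary`) are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

CONFIDENCE_THRESHOLD = 0.65  # assumption: midpoint of the 60-70% range
NEGATIVE_PATTERNS = ("this is outrageous", "my lawyer", "third email")
COMPLEX_INTENTS = {"partial_cancellation", "multi_product_order", "legal"}


@dataclass
class Conversation:
    customer_messages: list[str]
    intent: str
    confidence: float
    attempted_actions: list[str] = field(default_factory=list)
    order_data: dict = field(default_factory=dict)


def handoff_reason(conv: Conversation) -> Optional[str]:
    """Return the first trigger that fires, or None if the agent may continue."""
    text = " ".join(conv.customer_messages).lower()
    if any(pattern in text for pattern in NEGATIVE_PATTERNS):
        return "negative_sentiment"
    if conv.intent in COMPLEX_INTENTS:
        return "complexity"
    if conv.confidence < CONFIDENCE_THRESHOLD:
        return "low_confidence"
    return None


def handoff_summary(conv: Conversation, reason: str) -> dict:
    """Package the context so the human agent does not start from zero."""
    return {
        "reason": reason,
        "customer_said": conv.customer_messages,
        "ai_attempted": conv.attempted_actions,
        "sentiment": "negative" if reason == "negative_sentiment" else "neutral",
        "order_data": conv.order_data,
    }
```

Sentiment is checked first on purpose: an angry customer should reach a human even when the agent is confident it knows the answer.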

Without that handoff, any AI deployment is smoke and mirrors. With a clean handoff, your human agent is faster than without AI — because the groundwork is already done.

GDPR, EU AI Act, SOC 2: Not a Nice-to-Have

Short and serious: AI agents process personal data. Names, addresses, order histories; for supplements brands often health data; for finance brands payment information.

If you operate in the EU, you're dealing with three frameworks: GDPR, EU AI Act, and for larger B2B customers SOC 2.

Three best practices that aren't negotiable:

- Data Minimization: The agent only sees what it needs for the specific task. Not "everything in the CRM."

- PII Masking: Sensitive data gets redacted before model access. The model sees placeholders, not raw data.

- Deletion routines: Chat logs auto-delete after defined retention periods. No "sometime later."
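Two of these practices are easy to picture in code. The sketch below masks email addresses and IBANs before text reaches a model and drops chat logs past a retention period. It is a simplified illustration, not a compliance tool: the regular expressions cover only two data types, and the 30-day default and function names are assumptions.

```python
import re
from datetime import datetime, timedelta, timezone

EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Simplified IBAN pattern: country code, check digits, then groups of up to four characters.
IBAN_RE = re.compile(r"\b[A-Z]{2}\d{2}(?: ?[A-Z0-9]{4}){3,7}(?: ?[A-Z0-9]{1,3})?\b")


def mask_pii(text: str) -> tuple[str, dict[str, str]]:
    """Replace sensitive values with placeholders; return the mapping to restore them later."""
    mapping: dict[str, str] = {}

    def substitute(pattern: re.Pattern, label: str, source: str) -> str:
        def repl(match: re.Match) -> str:
            placeholder = f"<{label}_{len(mapping) + 1}>"
            mapping[placeholder] = match.group(0)
            return placeholder

        return pattern.sub(repl, source)

    text = substitute(IBAN_RE, "IBAN", text)
    text = substitute(EMAIL_RE, "EMAIL", text)
    return text, mapping


def purge_expired(logs: list[dict], now: datetime, retention_days: int = 30) -> list[dict]:
    """Keep only logs younger than the retention period (30 days is an assumed default)."""
    cutoff = now - timedelta(days=retention_days)
    return [log for log in logs if log["created_at"] >= cutoff]


masked, mapping = mask_pii("Refund to DE89 3704 0044 0532 0130 00, contact anna@example.com")
print(masked)  # Refund to <IBAN_1>, contact <EMAIL_2>
```

The mapping stays on your side. The model only ever sees the placeholders, and the real values are substituted back after the answer is generated.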

The point often overlooked in DACH: Where does the model run? If your provider uses OpenAI APIs in US regions, you've got a data transfer to the US — with all the GDPR implications.

EU hosting, signed DPA, ISO certification. That's your contract language with B2B customers. Not a minor detail.

The Real ROI: Numbers That Hold Up in DACH E-Commerce

Enough theory. What's in it for you?

| KPI | Realistic 2026 benchmark | Source |
|---|---|---|
| Fully automated tickets | 70–80% after 6-month ramp-up | ArminCX benchmarks |
| Service operations cost reduction | 30% via agentic AI | Gartner forecast 2029 |
| Resolution time (Retail AI) | From hours to minutes | Freshworks / Freddy AI |
| Capacity for premium cases | +2–3 hours per agent per day | Chatarmin customer data |

These aren't marketing numbers. They're the current status of brands that rolled out AI customer service agents cleanly over the past 12 months. The 30% figure isn't a Chatarmin invention either — it's the current Gartner forecast for the market through 2029.

What happens to your team? The "AI replaces everyone" panic is nonsense. What actually happens: the support agent shifts from ticket handler to orchestrator. They monitor the AI, step in on escalations, build workflows. The job gets more interesting, not obsolete.

The trade-off nobody mentions: 70% automation only works if your processes are properly documented. Teams that handle special cases by gut feeling can't automate them. AI customer service agents force you into order. That's a feature — but it stings for a minute.

Which Support Tickets Can Actually Be Automated?

Honest answer: not all of them. But more than you think.

| Ticket type | Share of volume | Automatable? | Typical setup |
|---|---|---|---|
| WISMO ("Where is my order?") | 30–50% | Yes, 95%+ | Shop API + carrier API → automatic status check |
| Return requests | 10–20% | Yes, 80–90% | Rule check (return window) → generate label → email |
| Address change | 5–10% | Yes, 90%+ | Only if not yet shipped → update in shop + ERP |
| Cancellation / order changes | 5–15% | Yes, 70% | Check inventory → trigger refund → confirm to customer |
| Voucher requests | 3–8% | Partially, 60% | Goodwill cases: human decides, AI preps |
| Product consultation | 5–15% | Partially, 40–60% | RAG with product PDFs; escalate on complexity |
| Complaints / defects | 3–10% | Rather not | Emotional, liability-relevant; human required |
| Invoice inquiries | 2–5% | Yes, 80% | Accounting system + automatic PDF delivery |

The rule: Anything based on structured data and clear decision logic can be automated. Anything requiring empathy or liability judgment belongs to humans.
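What "structured data and clear decision logic" looks like for a return request, as a small sketch. The 30-day window, the reason codes, and the action names are assumptions for illustration; your own policy defines the real values.

```python
from dataclasses import dataclass
from datetime import date, timedelta

RETURN_WINDOW_DAYS = 30  # assumption: replace with your actual return policy


@dataclass
class ReturnRequest:
    order_id: str
    delivered_on: date
    requested_on: date
    reason: str  # hypothetical reason codes, e.g. "wrong_size", "defect"


def decide_return(req: ReturnRequest) -> str:
    """Automate the clear-cut case; route everything else to a human."""
    if req.reason in {"defect", "complaint"}:
        # Liability-relevant: belongs to a human.
        return "handoff_to_human"
    deadline = req.delivered_on + timedelta(days=RETURN_WINDOW_DAYS)
    if req.requested_on <= deadline:
        return "generate_label_and_email"
    # Outside the window is a goodwill decision, so a human decides.
    return "handoff_goodwill_review"
```

Note that the function never declines on its own. Inside the window it acts, and outside it a human makes the goodwill call.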

The 70–80% automation rate you see in demos isn't magic AI. It comes from the fact that WISMO + returns + address changes often already make up 60% of your ticket volume.
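You can check that arithmetic yourself. The sketch below takes the midpoint of each range in the table and computes the blended automation rate. The assumptions: "95%+" and "90%+" are read as their lower bounds, "rather not" counts as 0%, and because the midpoint shares do not add up to exactly 100%, the result is normalized by their sum.

```python
# (share of ticket volume, automatable fraction), midpoints of the table ranges.
TICKET_MIX = {
    "wismo": (0.40, 0.95),
    "returns": (0.15, 0.85),
    "address_change": (0.075, 0.90),
    "cancellation": (0.10, 0.70),
    "voucher": (0.055, 0.60),
    "product_consultation": (0.10, 0.50),
    "complaints": (0.065, 0.0),  # assumption: "rather not" treated as 0%
    "invoice": (0.035, 0.80),
}


def blended_automation_rate(mix: dict[str, tuple[float, float]]) -> float:
    """Volume-weighted average of the per-type automation rates."""
    total_share = sum(share for share, _ in mix.values())
    automated = sum(share * rate for share, rate in mix.values())
    return automated / total_share


rate = blended_automation_rate(TICKET_MIX)
print(f"{rate:.1%}")  # 77.1%
```

With these midpoints the blended rate comes out at roughly 77%, inside the 70–80% band. Swap in your own ticket mix from the process audit to see where you would land.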

Market Overview 2026: Who Plays Where?

The market has sorted itself out since 2024. Two camps:

AI-first agent platforms (global):

- Sierra, Decagon, Cognigy, Kore.ai: Enterprise focus, deep workflow engine, mostly US/UK-first.

- Intercom / Fin: Fast setup, solid for digital products. Weak on DACH ERP integrations.

- Zendesk AI, Gorgias: Strong in classic ticketing. Costs spike in the Q4 peak season when pricing scales with volume (Gorgias charges per ticket).

DACH-specific e-commerce tools:

- ArminCX (by Chatarmin): AI-first architecture. Native integrations with Shopify, JTL, Xentral, Shopware, Billbee. Omnichannel inbox (email, WhatsApp, Instagram, Facebook, live chat). German hosting, German-speaking customer success.

What actually matters in a tool comparison — and what's not in the sales decks:

- Can the AI execute real actions or only produce text?

- How deep is the integration with your ERP/WMS?

- How is the handoff to humans handled?

- EU or US hosting?

- Who trains the AI — you or the provider?

Anyone picking a US tool for DACH e-commerce in 2026 because it "sounds hip" pays three times. Once for the license. Once for onboarding. And a third time because JTL and Billbee never integrate cleanly.

The Rollout: 4 Steps to Production

Most teams don't fail on the tool. They fail on the rollout process. Here's what clean looks like:

1. Process Audit & Ticket Classification (Week 1–2) Pull the last three months of tickets from your system. Classify by type (WISMO, returns, cancellations, etc.) and identify your top 5 categories. Those are your pilot candidates.

2. Prepare the Knowledge Base (Week 2–4) Your AI is only as good as its data sources. Clean up your help center. Document FAQ answers. Upload product datasheets. Keep shipping and return policies current. That's the homework nobody gets to skip.

3. Pilot on One Ticket Type (Week 4–8) Start with a clearly scoped use case — usually WISMO. One ticket type, one team, clear success metrics: automation rate, CSAT, escalation rate. Anyone trying to automate "everything at once" fails.

4. Rollout with Monitoring (Week 8+) One new ticket type per sprint. Weekly reviews of confidence scores, hallucination rate, and handoff reasons. Iterate workflows. After six months: 70–80% automation rate — if you stay consistent.
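Step 1 needs less tooling than most teams expect. A rough first pass over exported ticket subjects could look like the sketch below. The keyword rules are hypothetical and deliberately crude: a real audit would lean on your help desk's existing tags or a proper classifier, but even this shows where the volume sits.

```python
from collections import Counter

# Hypothetical keyword rules; order matters, the first matching category wins.
KEYWORDS = {
    "wismo": ("where is my", "tracking", "not arrived"),
    "returns": ("return", "send back"),
    "cancellation": ("cancel",),
    "address_change": ("address",),
    "invoice": ("invoice",),
}


def classify(subject: str) -> str:
    text = subject.lower()
    for category, keywords in KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return category
    return "other"


def top_categories(subjects: list[str], n: int = 5) -> list[tuple[str, int]]:
    """Count tickets per category and return the n largest."""
    counts = Counter(classify(subject) for subject in subjects)
    return counts.most_common(n)


sample = [
    "Where is my order?",
    "Tracking link broken",
    "I want to return my shoes",
    "Please cancel order 123",
    "Where is my parcel",
]
print(top_categories(sample))
```

If "other" ends up among your biggest categories, that is a finding in itself: those are the undocumented special cases that have to be written down before they can be automated.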

What can go wrong:

- Pilot zone too big: "Let's automate everything right away" → nothing works properly.

- Bad data: Contradictory FAQ articles → the AI learns nonsense.

- No monitoring: No one watches the KPIs → nobody notices the agent hallucinating.
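The monitoring point is the cheapest one to fix. A weekly review can start from a report like the following sketch. The record fields are assumptions: in particular, `flagged_hallucination` is assumed to be set by a human reviewer who samples AI answers, since the agent cannot reliably flag its own mistakes.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class TicketResult:
    resolved_by_ai: bool
    confidence: float
    handoff_reason: Optional[str] = None
    flagged_hallucination: bool = False  # set by a human reviewer during sample checks


def weekly_report(results: list[TicketResult]) -> dict:
    """Aggregate the KPIs for the weekly review."""
    total = len(results)
    if total == 0:
        return {"tickets": 0}
    automated = sum(r.resolved_by_ai for r in results)
    flagged = sum(r.flagged_hallucination for r in results)
    return {
        "tickets": total,
        "automation_rate": automated / total,
        "escalation_rate": (total - automated) / total,
        "hallucination_rate": flagged / total,
        "avg_confidence": sum(r.confidence for r in results) / total,
        "handoff_reasons": Counter(r.handoff_reason for r in results if r.handoff_reason),
    }
```

The `handoff_reasons` counter is the most useful line for iterating: if one reason dominates week after week, that tells you which workflow or knowledge base article to fix next.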

The biggest mistake, though, is this: teams expect Month-1 results. Realistically, the first 40–60% automation lands after 8–12 weeks, the 70–80% after six months. Anyone who can't accept that pulls the rug out from under both the team and the tool.

Frequently Asked Questions About AI Customer Service Agents

Are AI customer service agents better than classic chatbots?

Yes. AI customer service agents execute real actions in shop, ERP, and CRM via APIs — classic chatbots only react to predefined scripts.

Do AI customer service agents always need a human handoff?

Yes. For complex, emotional, or legal cases, human handoff is mandatory — without a clean handoff, CSAT drops sharply.

Are AI customer service agents GDPR-compliant?

Yes, but only with EU hosting, a signed DPA, and active PII masking. Without these building blocks, deployment is a compliance risk.

Will AI customer service agents replace my support team?

No. They take over 70–80% of standard requests — the team then focuses on complex cases and strategic work.

Do AI customer service agents work with Shopify, JTL, and Xentral?

Yes, but only with DACH-specific platforms. US tools regularly fail at deep ERP integration in the German-speaking e-commerce ecosystem.

Do AI customer service agents hallucinate?

Yes, mostly when they run without RAG. With Retrieval-Augmented Generation, the agent answers from verified company data, which sharply reduces made-up answers. Monitoring catches the rest.

Can AI customer service agents work multilingually?

Yes. Modern LLM-based agents understand and respond in 50+ languages — the key is having your knowledge base available in the target languages.

Is AI customer service quick to deploy?

No. First automation wins land in 4–8 weeks — but 70–80% automation rates realistically take six months.

Can AI customer service agents handle returns autonomously?

Yes. Via APIs, they check return windows, generate return labels, and trigger refunds, with no manual intervention for standard cases.

Are AI customer service agents the same as agentic AI?

No. AI customer service agents are a specific use case; agentic AI describes the broader paradigm of autonomous systems that plan and act, up to and including multi-agent setups.

Conclusion: Rethink Customer Service — Or Get Replaced

I used to be the one handling tickets manually. That works at 30 tickets a day. At 300, it's torture. At 3,000, you'd be better off never having started a business.

AI customer service agents aren't a tech gimmick. They're the only way a DACH e-commerce team still scales cleanly in 2026 — without tripling headcount.

But — and this is the important part: the technology alone gets you nowhere. Anyone deploying an LLM chatbot without RAG, without clean handoffs, without GDPR basics is just building a new problem. Anyone doing it right has a team six months from now that doesn't answer "Where is my package?" anymore — and works strategically instead.

Want to see how this looks for Shopify, JTL, Xentral & co. inside Chatarmin? Book a demo. 30 minutes, system live, your use case on your data. No slideshow.