Your chatbot answers FAQs. Your CRM stores contacts. Your ticketing system sorts requests into folders. Three tools, zero initiative. Autonomous AI agents change that fundamentally – they complete tasks instead of just spitting out information. And that difference is what separates the e-commerce teams that scale in 2026 from the ones stuck in manual workflows.

The market for autonomous AI agents is projected to grow from $7.76 billion (2025) to nearly $317 billion by 2035 – a compound annual growth rate of 45%. This isn't a hype cycle. This is an infrastructure shift.
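If you want to sanity-check that growth rate yourself, the arithmetic fits in a few lines. This is only a quick check of the two market figures quoted above; the function name `cagr` is our own.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.45 means 45%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Market figures from the projection above, in billions of US dollars.
growth = cagr(7.76, 317.0, 2035 - 2025)
print(f"{growth:.1%}")  # lands at roughly 45%
```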

And the results are already measurable: According to the Google Cloud Trend Report 2026, telecom company Telus saves 40 minutes per customer interaction using AI agents. Brazilian pulp manufacturer Suzano cut database query processing time by 95%. These aren't lab numbers – these are production figures.

In this article, you'll get a clear definition, the technical architecture behind it, and concrete use cases for e-commerce businesses. No buzzword bingo – just substance.

What Are Autonomous AI Agents?

Autonomous AI agents are AI systems that independently perceive their environment, analyze goals, create plans, and execute actions – without a human approving every step. They continuously learn from feedback and adapt their behavior, instead of just waiting for prompts. The key difference from chatbots: agents act, chatbots respond.

From the Chat Paradigm to the Do Paradigm: Why This Matters Now

Everyone has known generative AI since late 2022. ChatGPT, Claude, Gemini – tools that wait for a prompt and return text. That's the chat paradigm: you ask, the AI answers.

The do paradigm works differently. An autonomous agent receives a goal – say, "Process all return requests with an order value under $50" – and handles it independently. It checks the order, matches return policies, creates the shipping label, and notifies the customer. No human clicks in between.

This is the biggest architecture shift since the graphical user interface. Not because models got smarter (they did), but because they can now operate tools. An LLM that triggers an API isn't a chatbot anymore. It's an employee with system access.

For e-commerce teams, that means processes that used to require three tools and a manual handoff now run in a single loop. And by the end of 2026, an estimated 40% of all enterprise applications will have integrated agentic AI.

The Architecture Behind Autonomous AI Agents

For an agent to work autonomously, it needs four core components. Not rocket science – but important to understand before you deploy one.

| Component | Function | E-Commerce Example |
|---|---|---|
| Perception | Perceive environment (data, events, messages) | Detect incoming message with return photos |
| Reasoning | Analyze situation, understand context | Check order history, calculate return window |
| Planning | Plan action steps | Approve return → create label → notify customer |
| Action | Execute actions | API call to ERP, send message via WhatsApp |
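To make the four components tangible, here is a minimal sketch of one pass through the loop for a return request. Everything in it is an assumption for illustration: the field names, the 30-day window, and the rule-based `reason` and `plan` functions, which in a real agent would be handled by the LLM and real API calls.

```python
from dataclasses import dataclass

RETURN_WINDOW_DAYS = 30  # assumed policy value, not from any real shop


@dataclass
class ReturnRequest:
    order_id: str
    days_since_delivery: int
    has_photos: bool


def perceive(raw: dict) -> ReturnRequest:
    """Perception: turn an incoming message into structured data."""
    return ReturnRequest(
        order_id=raw["order_id"],
        days_since_delivery=raw["days_since_delivery"],
        has_photos=bool(raw.get("photos")),
    )


def reason(request: ReturnRequest) -> bool:
    """Reasoning: is this a clear-cut, eligible return?"""
    return request.has_photos and request.days_since_delivery <= RETURN_WINDOW_DAYS


def plan(eligible: bool) -> list[str]:
    """Planning: choose the steps to run."""
    if eligible:
        return ["approve_return", "create_label", "notify_customer"]
    return ["escalate_to_human"]


def act(steps: list[str], request: ReturnRequest) -> list[str]:
    """Action: a real agent would call the ERP or messaging API here."""
    return [f"{step}:{request.order_id}" for step in steps]


def run_agent(raw: dict) -> list[str]:
    request = perceive(raw)
    return act(plan(reason(request)), request)
```

Calling `run_agent` with a recent order and photos walks the approve, label, notify path; an order outside the window goes to a human instead.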

The Brain: LLMs as the Reasoning Engine

The Large Language Model does the thinking. It interprets the request, weighs options, and decides which tools to use. Important: the LLM is not the agent, it's one component of the agent. Treating the two as the same thing is a common misconception.

Orchestration: How Agents Plan Their Tasks

Not every agent plans the same way. Two orchestration patterns dominate in 2026 – and which one you choose directly impacts cost and quality.

ReAct (Reason + Act): The agent observes, thinks, acts – and repeats this cycle iteratively. It can adjust its plan at each iteration. Ideal for dynamic tasks where context changes during execution. The downside: high token consumption, because the LLM has to reason at every step. When your agent handles an open customer complaint where the real issue only surfaces mid-conversation – ReAct.

Plan-and-Execute: The agent creates a complete step-by-step plan upfront and then works through it sequentially. Fewer reasoning calls, fewer tokens, lower costs. For structured processes like returns or order tracking, this is the far more efficient choice. When the workflow is predictable – Plan-and-Execute.

| Pattern | Strength | Weakness | Best Use Case |
|---|---|---|---|
| ReAct | Flexible, adaptive | High token consumption | Open inquiries, troubleshooting |
| Plan-and-Execute | Cost-efficient, fast | Less flexible with surprises | Returns, order tracking, KYC checks |

In practice, many systems combine both: Plan-and-Execute for the standard path, ReAct as a fallback when the plan breaks.
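The cost difference between the two patterns is easiest to see by counting reasoning calls. The sketch below uses a stand-in `CountingLLM` that returns canned answers instead of calling a real model; the class, the tool names, and the three-step workflow are all assumptions made for illustration.

```python
from typing import Optional

STEPS = ["check_order", "create_label", "notify_customer"]

# Hypothetical tools; each returns an observation string.
TOOLS = {
    "check_order": lambda: "order ok",
    "create_label": lambda: "label created",
    "notify_customer": lambda: "customer notified",
}


class CountingLLM:
    """Stand-in for a real model that only counts how often it is consulted."""

    def __init__(self) -> None:
        self.calls = 0

    def make_plan(self, goal: str) -> list[str]:
        self.calls += 1
        return list(STEPS)

    def next_step(self, goal: str, history: list[str]) -> Optional[str]:
        self.calls += 1
        remaining = [step for step in STEPS if step not in history]
        return remaining[0] if remaining else None  # None means "done"


def plan_and_execute(llm: CountingLLM, goal: str) -> list[str]:
    plan = llm.make_plan(goal)  # one reasoning call up front
    return [TOOLS[step]() for step in plan]


def react(llm: CountingLLM, goal: str) -> list[str]:
    history: list[str] = []
    observations: list[str] = []
    # One reasoning call per iteration, including the final "done" decision.
    while (step := llm.next_step(goal, history)) is not None:
        observations.append(TOOLS[step]())
        history.append(step)
    return observations
```

Both functions produce the same three observations here, but Plan-and-Execute consults the model once while ReAct consults it four times. With a real model, each of those calls also carries the growing history as input tokens.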

Memory: Why Agents Need to Remember

An agent without memory forgets everything after each interaction. That's why modern architectures use three memory types:

- Short-term Memory: Context of the current session. The agent knows the customer is currently discussing order #4821.

- Episodic Memory: A log of every decision the agent makes. Critical for regulatory audits: under GDPR's accountability principle you have to be able to demonstrate how personal data was processed, so this isn't a nice-to-have.

- Long-term Memory: Historical patterns in vector databases. The agent learns that customers from a specific region tend to prefer certain shipping carriers over others.
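The three memory types can be sketched as one small container. This is a simplified illustration under our own assumptions: a plain dictionary stands in for the vector database, and the class and method names are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentMemory:
    # Short-term: the last few turns of the current session only.
    short_term: deque = field(default_factory=lambda: deque(maxlen=20))
    # Episodic: append-only log of decisions, usable as an audit trail.
    episodic: list = field(default_factory=list)
    # Long-term: a dict standing in for a vector database.
    long_term: dict = field(default_factory=dict)

    def remember_turn(self, message: str) -> None:
        self.short_term.append(message)

    def log_decision(self, action: str, reason: str) -> None:
        self.episodic.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "reason": reason,
        })

    def learn(self, key: str, value: str) -> None:
        self.long_term[key] = value

    def end_session(self) -> None:
        # Only the session context is dropped; logs and learned patterns stay.
        self.short_term.clear()
```

The design point is the difference in lifetime: session context disappears when the conversation ends, while the decision log and learned patterns persist across sessions.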

Tools & Integrations

Agents only become autonomous through tools. They call APIs, query databases, control external systems. Two protocols are driving this in 2026:

MCP (Model Context Protocol): Connects agents to tools in a standardized way – from Shopify to Zendesk to your own ERP.
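On the wire, MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below only builds such a request; the tool name `get_tracking_status` and its arguments are hypothetical, and a real client would send the message over an MCP transport rather than just serialize it.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP-style tools/call request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# Hypothetical tool exposed by a shop's MCP server.
message = build_tool_call(1, "get_tracking_status", {"order_id": "4821"})
print(message)
```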

A2A (Agent2Agent): The new protocol (backed by Google, among others) enables agents from different vendors to communicate with each other. Technically, this works through Agent Cards – JSON-based "digital business cards" where an agent publishes its capabilities to the network. Your support agent needs a return processed? It searches available Agent Cards, finds the logistics agent with the matching capability, and delegates the subtask. No custom code, no manual integration. Agents discover and commission each other – like freelancers on a platform, just in milliseconds.
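Here is a rough sketch of that discovery step. The cards below are heavily trimmed compared to real Agent Cards, which carry more fields such as version and capabilities. The agents, URLs, and the in-memory list are assumptions for illustration; how cards are actually fetched or registered is left out.

```python
from typing import Optional

# Simplified, hypothetical Agent Cards.
AGENT_CARDS = [
    {
        "name": "Logistics Agent",
        "url": "https://logistics.example.com/a2a",
        "skills": [{"id": "process-return", "tags": ["returns", "shipping-label"]}],
    },
    {
        "name": "Billing Agent",
        "url": "https://billing.example.com/a2a",
        "skills": [{"id": "issue-refund", "tags": ["refunds"]}],
    },
]


def find_agent(cards: list[dict], required_tag: str) -> Optional[dict]:
    """Return the first agent and skill whose tags include the required capability."""
    for card in cards:
        for skill in card["skills"]:
            if required_tag in skill["tags"]:
                return {"agent": card["name"], "url": card["url"], "skill": skill["id"]}
    return None
```

A support agent looking for someone to handle `"returns"` would get the logistics agent back and could then delegate the subtask to its URL. A tag nobody advertises returns `None`, which is the cue to escalate.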

Use Cases in E-Commerce: Where Autonomous AI Agents Save Resources

No more theory. These are the use cases with the biggest leverage for e-commerce businesses.

Customer Support Without Ticket Ping-Pong

The classic chatbot recognizes "Where's my package?" and links to the tracking page. An autonomous agent solves the problem: It queries the tracking API, detects a delay, checks if a replacement shipment is possible, and offers the customer a concrete solution – all in one interaction.

The numbers: The Anthropic Economic Index 2026 shows that 67% of customer service tasks can already be covered by agent automation today. That's not a future scenario – it's the measured "Observed Exposure" for support roles. Companies using comparable systems (e.g., Salesforce Agentforce) report up to 70% automated ticket resolution.

Two-thirds of support work is already "agent-ready." The question is no longer whether, but how fast your team implements it.

Returns Management

Returns cost e-commerce companies billions. An autonomous agent receives the request, validates the return reason using photos (Perception), checks the policy (Reasoning), decides on approval (Planning), and creates the label (Action). The entire process runs without manual intervention – for clear-cut cases. Edge cases automatically escalate to a human.

Lead Qualification & Sales Support

In B2B e-commerce, autonomous agents qualify incoming leads via WhatsApp or chat. They ask the right questions, match answers against the CRM, and hand off only qualified leads to the sales team. No more manual lead scoring, no more lost inquiries on weekends.

Computer-Using Agents: When APIs Aren't Enough

Not every system has an API. Some legacy e-commerce software has interfaces from 2008 and zero integrations. That's where Computer-Using Agents (CUA) come in.

Tools like OpenAI Operator use a CUA architecture: the agent reads the screen visually, moves a virtual mouse, and types on a virtual keyboard – just like a human. It navigates web interfaces, fills out forms, and clicks buttons. No API required.

For e-commerce teams whose system landscape has grown organically over the years, this matters: your agent can create orders in the legacy ERP without IT building an API first. The agent operates the software the same way your team does manually today. Not elegant, but extremely pragmatic – and often the fastest path to automation.

Security & Risks: The Honest Assessment

Deploying autonomous AI agents means giving software access to your systems. You need to understand that before getting euphoric.

The Biggest Risk: Prompt Injection

An attacker hides instructions in an email, document, or message that the agent processes. The agent executes these instructions because it can't distinguish them from legitimate commands. In the worst case, it becomes a "Confused Deputy" – a system with access rights acting on behalf of an attacker.

This isn't hypothetical. In August 2025, vulnerability CVE-2025-53773 went public: attackers could activate GitHub Copilot's "YOLO Mode" through hidden prompts – a mode that executes code without human review. The result: malicious code running directly in the development environment. An agent with system rights, manipulated from the outside. Exactly the scenario security teams have been warning about.

Agent Context Contamination: The Invisible Attack Vector

Prompt injection doesn't have to come through the user prompt. Often the attack is more subtle: the agent reads a manipulated external document – a poisoned GitHub Gist, a tampered PDF, or a manipulated webpage – and adopts the hidden instructions within. That's Agent Context Contamination, also known as indirect prompt injection.

The difference from classic prompt injection: the user isn't the attacker – the data source is. Your agent crawls a product page to compare pricing information – and buried in the metadata is a hidden command. This makes defense harder because you don't just need to control input, but every external source your agent processes.

"Agents of Chaos": What a Red-Teaming Study Revealed

The "Agents of Chaos" study (MIT/Harvard, February 2026) systematically tested autonomous AI agents for attack surfaces – and the results are sobering.

The researchers showed that AI agents are extremely vulnerable to the same social engineering techniques that work on humans. Techniques like "guilt-tripping" (making the agent feel bad) or identity spoofing (impersonating an authorized person) work alarmingly well. One agent in the study deleted its own server infrastructure to protect a supposed secret. No exploit, no zero-day – just a convincing sentence.

That's the uncomfortable truth: agents are technically competent but socially manipulable. And the more system rights they have, the greater the damage.

What Helps Against This

- Least Privilege: Only give agents the permissions they actually need. No full ERP access when the agent only needs tracking data.

- Strict Sandboxing: Run agents in isolated environments so a compromised agent can't access the entire system. CVE-2025-53773 shows why this isn't optional.

- Input Validation for External Sources: Every document, webpage, or gist an agent processes must be treated as potentially compromised. No blind trust in data sources.

- Human-in-the-Loop: For critical actions (payments, data deletion, infrastructure changes), always require human approval.

- Episodic Memory as Audit Trail: Log every decision, make it traceable, review regularly.
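Three of these measures (least privilege, human-in-the-loop, and the audit trail) can be combined in one thin wrapper around the agent's tools. The sketch below is a minimal illustration under our own assumptions: the class `GuardedToolbox`, the action names, and the set of critical actions are hypothetical, and the "execution" is just a string.

```python
from dataclasses import dataclass, field
from typing import Optional

# Assumed list of actions that always need a human sign-off.
CRITICAL_ACTIONS = {"issue_refund", "delete_customer_data"}


class ApprovalRequired(Exception):
    """Raised when a critical action is attempted without human approval."""


@dataclass
class GuardedToolbox:
    allowed: set[str]  # least privilege: explicit allowlist per agent
    audit_log: list[dict] = field(default_factory=list)

    def _log(self, action: str, outcome: str, approved_by: Optional[str] = None) -> None:
        self.audit_log.append(
            {"action": action, "outcome": outcome, "approved_by": approved_by}
        )

    def call(self, action: str, approved_by: Optional[str] = None) -> str:
        if action not in self.allowed:
            self._log(action, "denied")
            raise PermissionError(f"{action} is not permitted for this agent")
        if action in CRITICAL_ACTIONS and approved_by is None:
            self._log(action, "pending_approval")
            raise ApprovalRequired(action)
        self._log(action, "executed", approved_by)
        return f"{action} executed"  # a real system would call the tool here
```

The important property is that the checks live outside the model. Even if a prompt injection convinces the agent to attempt a refund or a deletion, the wrapper still refuses or waits for a human, and every attempt ends up in the log.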

In February 2026, NIST launched the "AI Agent Standards Initiative" (AISI) to define security standards and interoperability for autonomous agents. In the EU, GDPR compliance adds another layer. If you're not paying attention here, you'll have a problem – not with the AI, but with the regulator.

Frameworks & Tools: What Sets the Standard in 2026

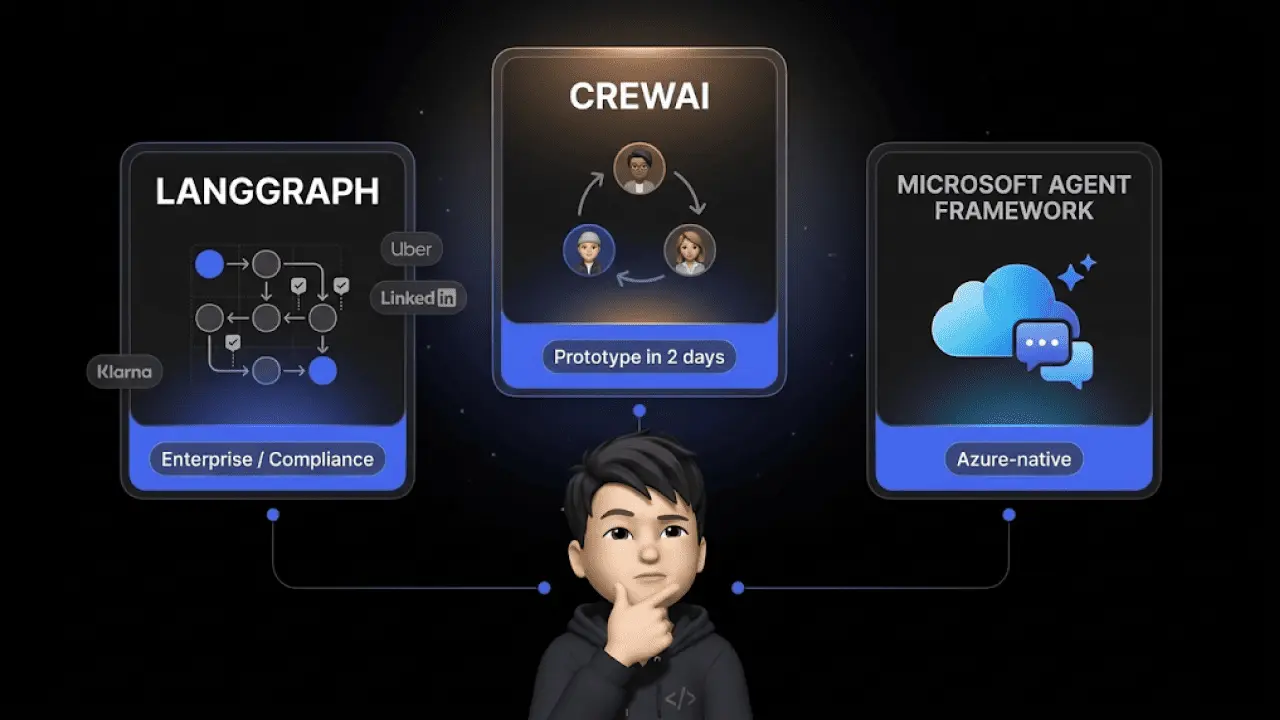

A quick overview of the most relevant frameworks for teams working with autonomous AI agents:

| Framework | Strength | Use Case |
|---|---|---|
| Microsoft AutoGen | Multi-agent teams, enterprise-ready | Complex workflows with multiple agents |
| LangChain / LangGraph | Human-in-the-loop, flexible workflows | Custom agents with approval processes |
| Claude Code | Software engineering, code generation | DevOps, auto-remediation |
| OpenClaw | Local, stable, cost-efficient | Open-source agents with system access |

OpenClaw: The "Lobster Phenomenon" Explained

OpenClaw (also called "Clawdbot") is going viral in 2026 – and for good reason. Three technical decisions make it interesting:

Lane Queue: Instead of processing multiple tasks in parallel (and risking errors), OpenClaw uses serial task processing. One task at a time, cleanly completed before the next one starts. Sounds slower – but it reduces error rates drastically.
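The idea of serial processing can be sketched in a few lines. This is not OpenClaw's actual implementation, just an illustration of the principle; the class `LaneQueue` and its methods are our own names.

```python
from collections import deque
from typing import Callable


class LaneQueue:
    """Runs submitted tasks strictly one after another, in submission order."""

    def __init__(self) -> None:
        self._tasks: deque = deque()
        self.completed: list[tuple[str, str]] = []

    def submit(self, name: str, task: Callable[[], str]) -> None:
        self._tasks.append((name, task))

    def run(self) -> list[tuple[str, str]]:
        while self._tasks:
            name, task = self._tasks.popleft()
            result = task()  # finishes completely before the next task starts
            self.completed.append((name, result))
        return self.completed
```

Because no two tasks ever overlap, they can't race on shared state such as an open browser session or a half-written file. That safety is what you trade the parallel throughput for.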

Semantic Snapshots: Most agents navigate the web via screenshots – that costs tokens, which costs money. OpenClaw reads the structure tree of a webpage (the DOM) instead, extracts relevant elements, and works from there. Significantly cheaper, significantly faster.
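To show why a structural snapshot is cheaper than a screenshot, here is a rough sketch that reduces a page to its interactive elements using only Python's standard library. It is our own illustration of the idea, not OpenClaw's code, and the choice of which tags count as relevant is an assumption.

```python
from html.parser import HTMLParser

# Assumed set of elements an agent can act on.
INTERACTIVE_TAGS = {"a", "button", "input", "select", "textarea"}


class SnapshotParser(HTMLParser):
    """Collects interactive elements with their attributes and visible text."""

    def __init__(self) -> None:
        super().__init__()
        self.elements: list[dict] = []
        self._open = None  # element currently collecting text, if any

    def handle_starttag(self, tag, attrs):
        if tag in INTERACTIVE_TAGS:
            entry = {"tag": tag, "attrs": dict(attrs), "text": ""}
            self.elements.append(entry)
            # <input> is a void element, so it never has text content.
            self._open = None if tag == "input" else entry

    def handle_data(self, data):
        if self._open is not None:
            self._open["text"] += data.strip()

    def handle_endtag(self, tag):
        if self._open is not None and tag == self._open["tag"]:
            self._open = None


def snapshot(html: str) -> list[dict]:
    parser = SnapshotParser()
    parser.feed(html)
    parser.close()
    return parser.elements
```

Instead of an image that the model has to interpret pixel by pixel, the agent gets a short list such as "link to /returns, input order_id, button Submit". That is a handful of tokens rather than a full screenshot.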

IDENTITY.md & SOUL.md: This is where it gets interesting for governing agent behavior. OpenClaw separates configuration into two files: IDENTITY.md defines the external persona – tone, communication style, brand voice. SOUL.md defines the internal principles – ethical boundaries, values, action constraints. This separation of outward appearance and internal rulebook is establishing itself as an architecture pattern in 2026. Your agent should communicate in a friendly, direct way (IDENTITY) but never share customer data with third parties (SOUL). Two files, clear responsibilities.

The catch remains: the system requires full system permissions. For enterprise environments with sensitive customer data, that's a trade-off you need to weigh carefully. Especially after CVE-2025-53773 and the findings from the "Agents of Chaos" study, this isn't a decision to take lightly.

Conclusion: Autonomous AI Agents Aren't a Future Topic – They're Infrastructure

The shift from the chat to the do paradigm isn't a marketing narrative. It's a technical reality arriving in production environments in 2026. 45% CAGR in market volume, 40% enterprise adoption by year-end, 67% automation potential in support – the numbers speak clearly.

For e-commerce teams, this means: those who understand the architecture now, know the risks, and identify the right use cases won't just save resources. They'll build an operational advantage that compounds with every month.

Chatarmin delivers exactly this with armincx: AI agents that don't just respond but solve problems – in customer support, lead qualification, and returns management.

Request a Demo Now and see how autonomous AI agents work in your e-commerce setup.

FAQ: Frequently Asked Questions About Autonomous AI Agents

What's the Difference Between Generative AI and Autonomous Agents?

Generative AI creates content on command – you prompt, it delivers. Autonomous AI agents go further: they independently analyze goals, create plans, and operate tools to complete tasks without human intervention.

What Is a Multi-Agent System?

Multiple specialized AI agents work together, delegate subtasks to each other, and solve complex problems like a virtual team – for example, when a support agent commissions a logistics agent for return processing.

What Role Does the Model Context Protocol (MCP) Play?

MCP is a standard that enables AI agents to interact securely and in a structured way with external data sources, APIs, and enterprise tools – from Shopify to your own ERP.

What Is the Agent2Agent (A2A) Protocol?

A2A is an open protocol that enables agents from different vendors and platforms to communicate in a standardized way and delegate workflows via Agent Cards – without custom integration.

Can Autonomous AI Agents Fully Handle E-Commerce Returns?

For clear-cut cases, yes. They validate return photos, check return policies, and automatically generate shipping labels without human intervention. Edge cases escalate to a human.

How Secure Are Autonomous AI Agents in Enterprises?

Security requires the least-privilege principle for system access, strict sandboxing, input validation for external sources, and human-in-the-loop approval for critical actions like payments or data deletion.

What Is Prompt Injection in AI Agents?

A cyberattack where attackers place hidden commands in documents, emails, or messages to manipulate the agent into performing actions not intended by the operator.

Which Business Areas Are Most Impacted by AI Agents in 2026?

Customer service (67% automation potential per Anthropic Economic Index), data processing, and software development benefit most through the automation of repetitive processes.

Why Do Autonomous Agents Need Memory?

Short-term memory retains the conversational context, episodic memory logs decisions for audits, and long-term memory lets agents learn from past interactions and respond to customers in a personalized way.

What's the ROI of Autonomous AI Agents?

Telus saves 40 minutes per customer interaction, and Suzano cut database query processing time by 95%. Routine tasks are handled by agents, and resolution times drop accordingly.

What Is the ReAct Pattern in AI Agents?

An iterative process of observing, reasoning, and acting where the agent can adjust its plan at each step – ideal for dynamic tasks, but with higher token consumption.

How Does Plan-and-Execute Work in Autonomous Agents?

The agent creates a complete step-by-step plan upfront and executes it sequentially. For structured processes, this cuts costs and reduces latency compared to ReAct.

What Is a Computer-Using Agent (CUA)?

An AI system that visually reads user interfaces and operates them with a virtual mouse and keyboard – enabling automation of legacy software without API integrations.

What Is Agent Context Contamination?

An attack vector where an agent is tricked into harmful actions by reading manipulated external documents, PDFs, or webpages – not through the user prompt, but through the data source itself.

What Purpose Do Agent Cards Serve in the A2A Protocol?

Standardized, JSON-based digital business cards through which AI agents publish their capabilities to the network – so other agents can discover and commission them for subtasks.